Overview

Analytics data is always scoped to the currently selected project and is refreshed from the API, with results cached for up to 5 minutes.

Accessing Analytics

To access the Analytics section:

- Click the Settings icon in the main navigation sidebar.

- Select Analytics from the settings menu (listed below Users).

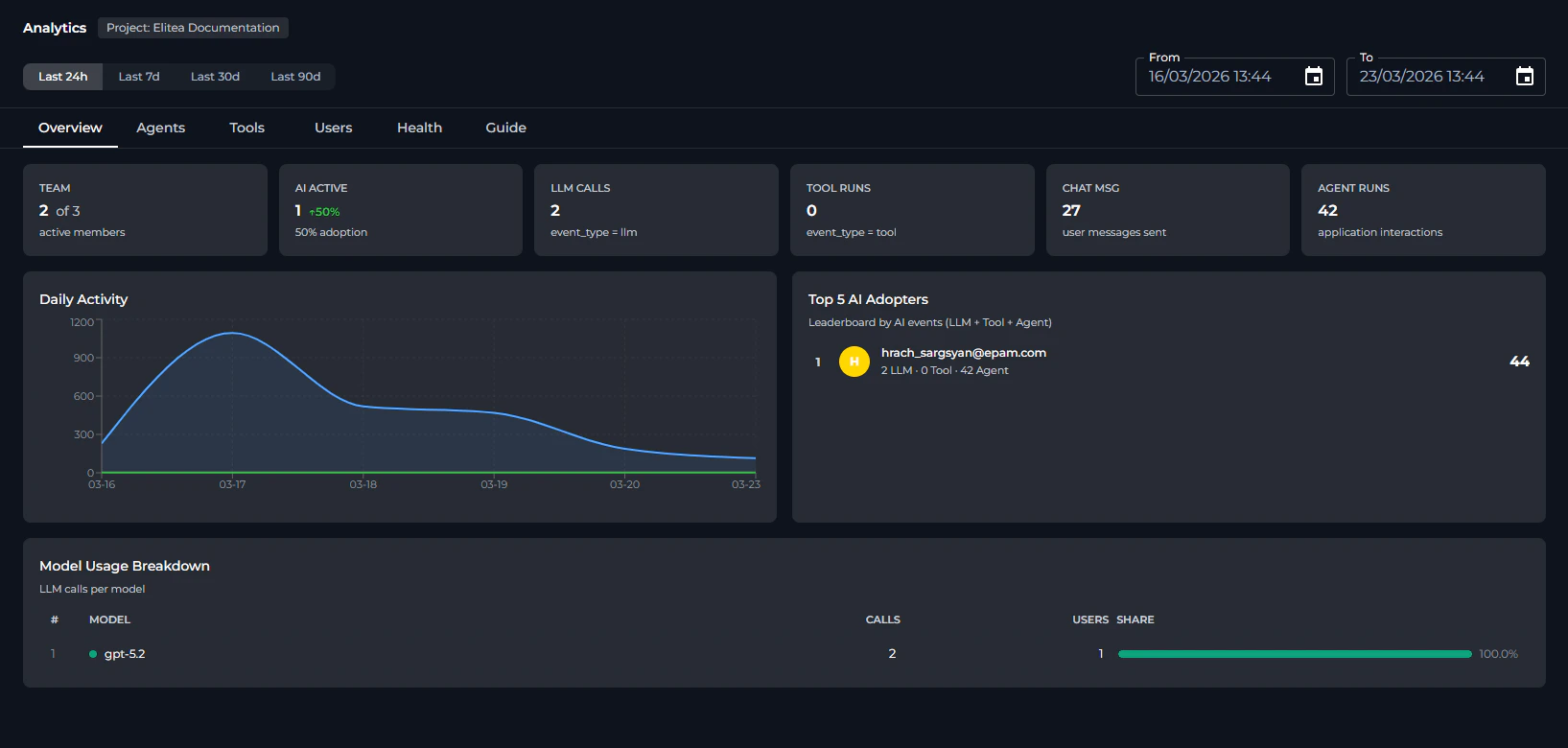

The Analytics dashboard will display, showing the project name next to the page title and the date filter bar at the top.

Analytics Interface Overview

Once opened, the Analytics page is composed of the following elements:

- Page header: Displays the title “Analytics” and the name of the currently selected project.

- Date filter bar: Located directly below the header; contains the four quick-preset buttons (Last 24h, Last 7d, Last 30d, Last 90d) on the left and the From / To datetime pickers on the right.

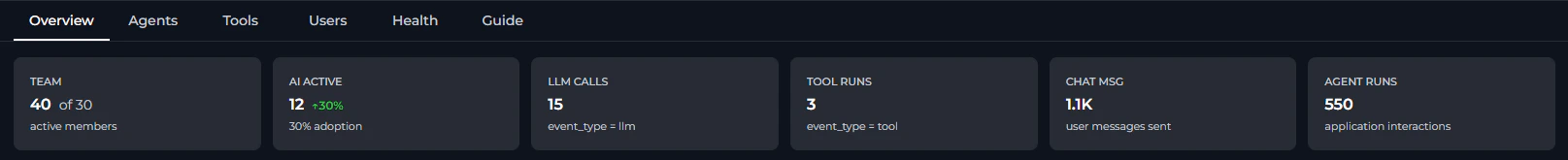

- Tab bar: A row of six tabs (Overview, Agents, Tools, Users, Health, Guide) used to switch between different analytics views.

- Content area: The main scrollable panel that renders KPI cards, charts, and tables for the active tab.

All metrics and charts show data only for the currently selected project. To view analytics for a different project, switch projects using the project selector in the sidebar before opening Analytics.

Date Range Controls

At the top of the Analytics page, a filter bar lets you control the time window applied to all tabs.

Quick Presets

Four preset buttons let you set the date range with a single click:

| Preset | Date Range |
|---|---|
| Last 24h | Previous 24 hours |
| Last 7d | Previous 7 days (default on page load) |
| Last 30d | Previous 30 days |
| Last 90d | Previous 90 days |

The From / To datetime pickers on the right of the filter bar set a custom range:

- From: Start of the analysis period. Cannot be set later than the To value.

- To: End of the analysis period. Cannot be set earlier than the From value.

The page loads with Last 7d pre-selected. Re-selecting a preset button instantly refreshes all tab data for that window without any additional action.
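The preset behaviour described above can be pictured with a short sketch. This is only an illustration, not the dashboard's actual code: the names `PRESETS`, `preset_window`, and `validate_custom_range` are hypothetical, and the sketch assumes each preset ends at the current time.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical mapping of the four preset labels to their window lengths.
PRESETS = {
    "Last 24h": timedelta(hours=24),
    "Last 7d": timedelta(days=7),
    "Last 30d": timedelta(days=30),
    "Last 90d": timedelta(days=90),
}
DEFAULT_PRESET = "Last 7d"  # pre-selected on page load


def preset_window(label, now):
    """Return the (From, To) pair for a preset, assuming the window ends at `now`."""
    return now - PRESETS[label], now


def validate_custom_range(start, end):
    """Mirror the picker rule: From cannot be later than To."""
    if start > end:
        raise ValueError("From cannot be later than To")


now = datetime(2024, 6, 30, 12, 0, tzinfo=timezone.utc)
start, end = preset_window(DEFAULT_PRESET, now)
validate_custom_range(start, end)
print(start.isoformat(), "to", end.isoformat())
```

Passing `now` in explicitly, rather than reading the clock inside the function, keeps the sketch deterministic and easy to check.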

Analytics Tabs

The Analytics dashboard is divided into six tabs. Click any tab label to switch views.

Overview Tab

The Overview tab presents project-wide KPI cards and summary charts for the selected date range.

KPI Cards

| KPI | Card | Description | Calculation |

|---|---|---|---|

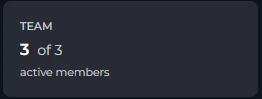

| TEAM |  | Active members (X) out of all users ever seen in the project (Y). | X = distinct users with ≥ 1 event in range; Y = distinct users all-time. |

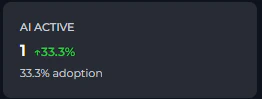

| AI ACTIVE |  | Users with at least one LLM, tool, or agent event in the period. Includes an adoption rate badge (e.g. ↑ 72%) = AI ACTIVE / TEAM × 100%. | Distinct users where event_type in (llm, tool) or entity_type = application. |

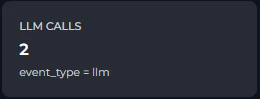

| LLM CALLS |  | Total number of Large Language Model invocations (prompts sent to AI models). | Count of events where event_type = "llm". |

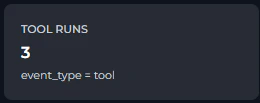

| TOOL RUNS |  | Total number of tool executions triggered by agents or users. | Count of events where event_type = "tool". |

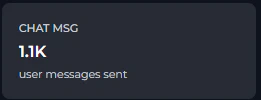

| CHAT MSG |  | Total user messages sent in the chat interface. | Count of events where action = "SIO chat_predict". |

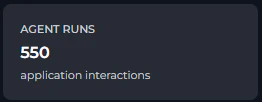

| AGENT RUNS |  | Total interactions with agents and pipelines. | Count of events where entity_type = "application". |
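As a rough illustration of the Calculation column, the sketch below derives the six KPI values from a list of event records. The record shape (`user_id`, `event_type`, `entity_type`, `action` keys) and the function name `overview_kpis` are assumptions made for this example. The source does not say whether the adoption rate divides by X or Y of the TEAM card; the sketch assumes X (members active in the range).

```python
def overview_kpis(events_in_range, all_time_user_ids):
    """Compute Overview KPI values from hypothetical event dicts."""

    def is_ai(event):
        # AI ACTIVE rule: event_type in (llm, tool) or entity_type = application.
        return (
            event["event_type"] in ("llm", "tool")
            or event.get("entity_type") == "application"
        )

    active = {e["user_id"] for e in events_in_range}
    ai_active = {e["user_id"] for e in events_in_range if is_ai(e)}
    return {
        "team_active": len(active),            # X
        "team_total": len(all_time_user_ids),  # Y
        "ai_active": len(ai_active),
        # Assumption: the badge divides by X, the members active in range.
        "adoption_rate_pct": round(100 * len(ai_active) / len(active)) if active else 0,
        "llm_calls": sum(e["event_type"] == "llm" for e in events_in_range),
        "tool_runs": sum(e["event_type"] == "tool" for e in events_in_range),
        "chat_msg": sum(e.get("action") == "SIO chat_predict" for e in events_in_range),
        "agent_runs": sum(e.get("entity_type") == "application" for e in events_in_range),
    }


sample_events = [
    {"user_id": 1, "event_type": "llm"},
    {"user_id": 1, "event_type": "socketio", "action": "SIO chat_predict"},
    {"user_id": 2, "event_type": "api"},
    {"user_id": 3, "event_type": "tool"},
    {"user_id": 3, "event_type": "agent", "entity_type": "application"},
    {"user_id": 4, "event_type": "api"},
]
kpis = overview_kpis(sample_events, all_time_user_ids={1, 2, 3, 4, 5})
print(kpis)
```

In this sample, two of the four active users have an AI event, so the adoption rate comes out at 50%.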

Overview Charts

Below the KPI cards, three chart sections are displayed side by side or stacked, depending on screen size:- Daily Activity — Area chart with two series:

- Events (blue): total platform events per day.

-

Users (green): unique active users per day.

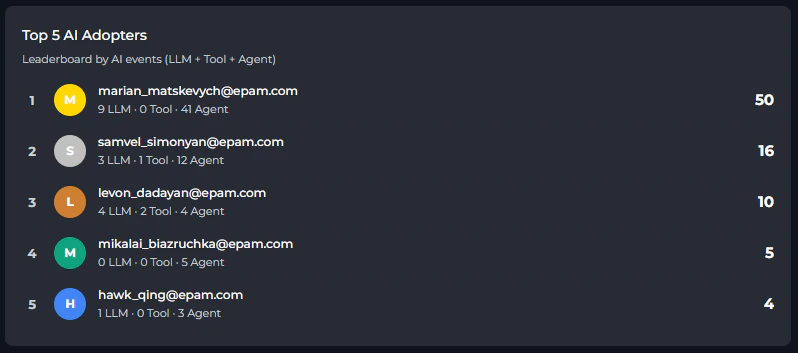

- Top 5 AI Adopters — Leaderboard table showing the five users with the most combined AI events (LLM + Tool + Agent). Each row displays:

- Rank and color-coded avatar (gold/silver/bronze for top 3).

- User email.

-

Per-type breakdown:

N LLM · N Tool · N Agent. -

Total AI events score.

-

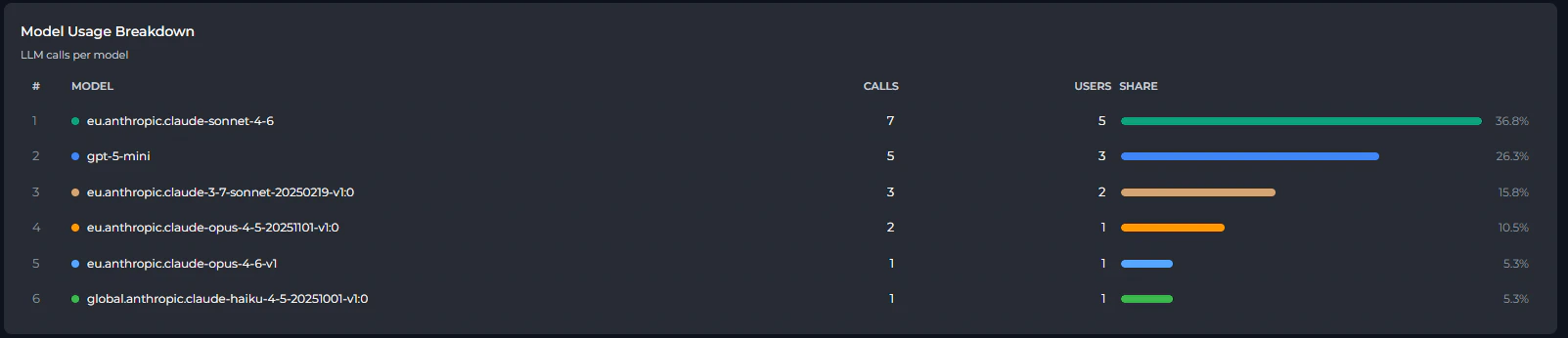

Model Usage Breakdown — Table ranking all LLM models used in the project by call volume. Columns include model name, total calls, distinct users, and a share bar showing each model’s percentage of total LLM calls.
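The Top 5 AI Adopters ranking can be approximated as follows. This is a hedged sketch, not the product's query: the `email` key, the function name `top_ai_adopters`, and the alphabetical tie-break are assumptions, and an event is counted under one type only (LLM, then Tool, then Agent).

```python
from collections import Counter, defaultdict


def top_ai_adopters(events, limit=5):
    """Rank users by combined LLM + Tool + Agent events (hypothetical record shape)."""
    per_user = defaultdict(Counter)
    for event in events:
        if event["event_type"] == "llm":
            kind = "llm"
        elif event["event_type"] == "tool":
            kind = "tool"
        elif event.get("entity_type") == "application":
            kind = "agent"
        else:
            continue  # not an AI event, so it does not count toward the score
        per_user[event["email"]][kind] += 1

    rows = [
        {
            "email": email,
            "llm": counts["llm"],
            "tool": counts["tool"],
            "agent": counts["agent"],
            "total": sum(counts.values()),
        }
        for email, counts in per_user.items()
    ]
    # Highest total first; ties broken by email (an assumption of this sketch).
    rows.sort(key=lambda row: (-row["total"], row["email"]))
    return rows[:limit]


sample = [
    {"email": "a@example.com", "event_type": "llm"},
    {"email": "a@example.com", "event_type": "llm"},
    {"email": "a@example.com", "event_type": "tool"},
    {"email": "b@example.com", "event_type": "agent", "entity_type": "application"},
    {"email": "b@example.com", "event_type": "llm"},
    {"email": "c@example.com", "event_type": "api"},
]
leaderboard = top_ai_adopters(sample)
print(leaderboard)
```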

Agents Tab

The Agents tab shows how individual agents (applications) are being used within the project.

-

An area chart showing the number of user messages (

SIO chat_predictevents) sent per day. Useful for tracking chat engagement trends independent of agent-specific metrics.

-

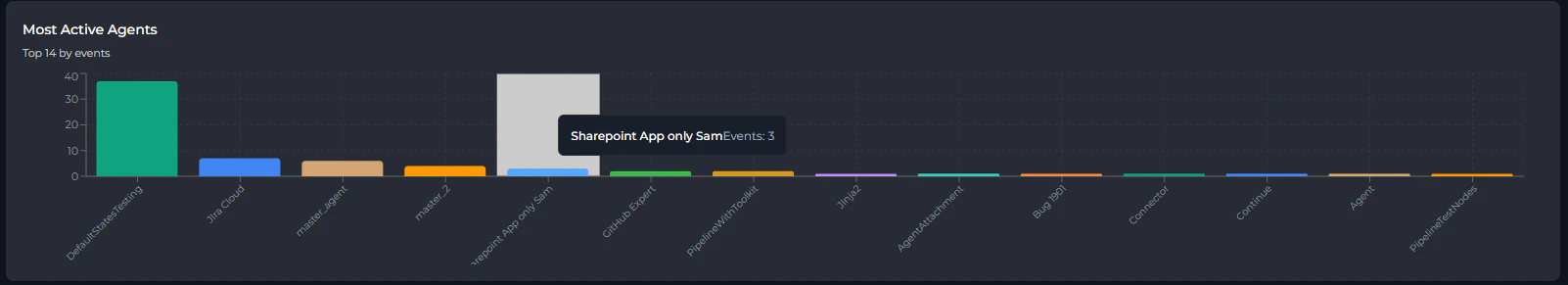

A bar chart showing the top 20 agents ranked by total event count. Each bar is color-coded and labeled with the agent name.

-

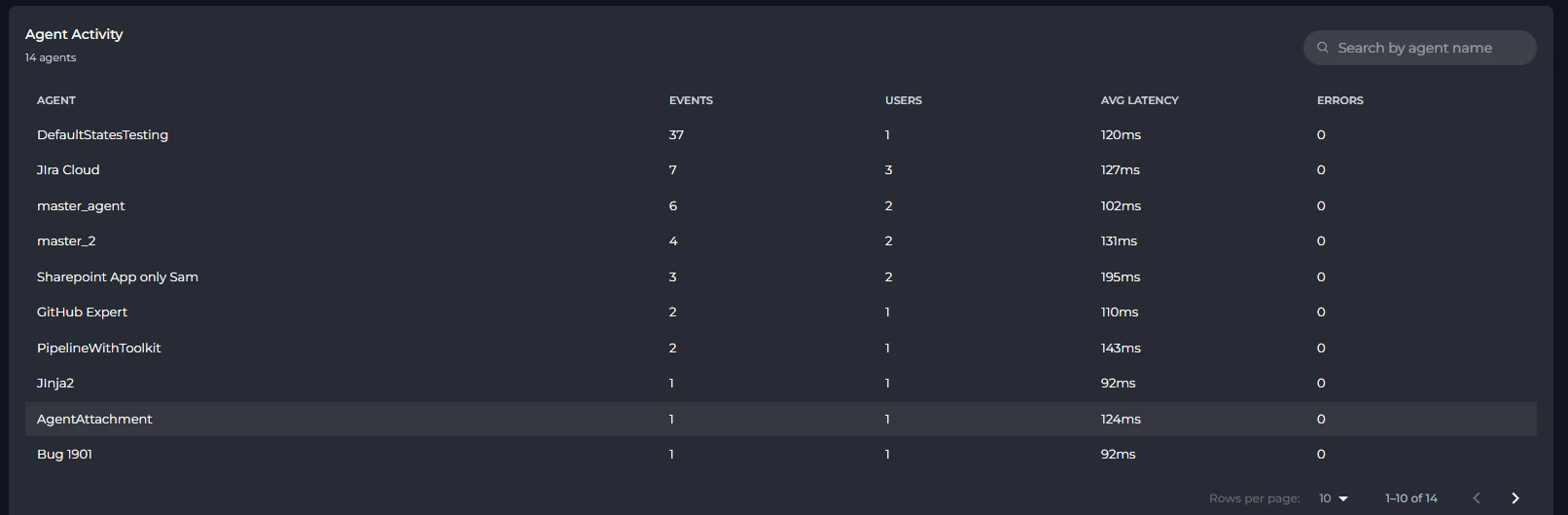

A paginated, searchable table listing all agents in the project:

Column Description Agent Agent name (clickable to open Agent Detail View) Events Total events triggered by this agent Users Number of distinct users who interacted with it Avg Latency Mean execution time (displayed as XmsorX.Xs)Errors Error count (highlighted in red when > 0)
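The Xms / X.Xs display format for Avg Latency could be produced by a helper like the one below. The function name `format_latency` and the one-second switch-over point are assumptions; the source only states the two output shapes.

```python
def format_latency(ms):
    """Format a duration in milliseconds as 'Xms' or 'X.Xs'.

    Assumption: values that round to less than 1000 ms are shown in
    milliseconds, and everything else in seconds with one decimal place.
    """
    if round(ms) < 1000:
        return f"{round(ms)}ms"
    return f"{ms / 1000:.1f}s"


for value in (850, 1234, 12000):
    print(value, "->", format_latency(value))
```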

Agent Detail View

Click any agent row to open a drill-down view showing:

- Header: Agent name with a back arrow to return to the full list.

- KPI Cards: Total Events, Unique Users, Avg Latency, Errors, Error Rate (error rate highlighted in red when > 5%).

- Daily Usage Chart: Area chart showing events and errors per day for this agent.

- Users table: Lists each user who interacted with the agent, with per-user events, average latency, and errors.

- Tools table: Lists each tool called by this agent, with call count.

Tools Tab

The Tools tab surfaces usage patterns for individual tools executed by agents or users.

| Column | Description |
|---|---|
| Tool | Tool name (clickable to open Tool Detail View) |
| Calls | Total number of times the tool was called |
| Users | Distinct users who triggered this tool |
| Avg Latency | Mean execution time |
| Errors | Error count (highlighted in red when > 0) |

Tool Detail View

Click any tool row to open a drill-down view:

- KPI Cards: Total Calls, Unique Users, Avg Latency, Errors, Error Rate.

- Daily Usage Chart: Area chart showing calls and errors per day for this tool.

- Users table: Users who called this tool, with per-user calls, average latency, and errors.

- Agents table: Agents that used this tool, with call count (resolved by correlating trace IDs).
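The trace-ID correlation behind the Agents table might look roughly like this. It is a sketch under stated assumptions: the keys `trace_id`, `entity_name`, and `tool_name` and the function `agents_for_tool` are hypothetical. It also assumes each trace belongs to a single agent.

```python
from collections import Counter


def agents_for_tool(events, tool_name):
    """Count tool calls per agent by matching trace_id values (hypothetical fields)."""
    # Step 1: map each trace to the agent event that carries the same trace_id.
    agent_by_trace = {
        e["trace_id"]: e["entity_name"]
        for e in events
        if e.get("entity_type") == "application" and e.get("trace_id")
    }
    # Step 2: attribute each call of the tool to the agent owning its trace.
    counts = Counter()
    for e in events:
        if e.get("event_type") == "tool" and e.get("tool_name") == tool_name:
            agent = agent_by_trace.get(e.get("trace_id"))
            if agent is not None:  # calls without a propagated trace stay unattributed
                counts[agent] += 1
    return counts.most_common()


sample = [
    {"event_type": "agent", "entity_type": "application", "entity_name": "Triage Bot", "trace_id": "t1"},
    {"event_type": "tool", "tool_name": "jira", "trace_id": "t1"},
    {"event_type": "tool", "tool_name": "jira", "trace_id": "t1"},
    {"event_type": "agent", "entity_type": "application", "entity_name": "Release Bot", "trace_id": "t2"},
    {"event_type": "tool", "tool_name": "jira", "trace_id": "t2"},
    {"event_type": "tool", "tool_name": "jira"},  # no trace_id propagated
]
print(agents_for_tool(sample, "jira"))
```

The last sample call has no trace ID and is therefore not attributed to any agent, so a table built this way can undercount when trace IDs are not propagated.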

Users Tab

The Users tab enables per-person analysis of platform activity.

| Column | Description |
|---|---|
| User | User email (or User <ID> if email is unavailable; clickable) |
| Events | Total events across all types |
| Days | Number of distinct calendar days the user was active |
| LLM | LLM call count |
| Tool | Tool execution count |
| Agent | Agent interaction count |
| Chat Msg | Chat message count |
| Errors | Error count (red when > 0) |
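To make the column definitions concrete, here is a minimal sketch that builds one row of this table from a user's events. The record keys (`timestamp`, `is_error`) and the function `user_row` are assumptions, and the per-type counts reuse the Overview KPI rules on the assumption that the Users tab applies the same definitions. All timestamps are assumed to share one time zone.

```python
from datetime import datetime


def user_row(email, events):
    """Summarise one user's events into the Users table columns (hypothetical fields)."""
    return {
        "user": email,
        "events": len(events),
        # Days: distinct calendar dates with at least one event.
        "days": len({e["timestamp"].date() for e in events}),
        "llm": sum(e["event_type"] == "llm" for e in events),
        "tool": sum(e["event_type"] == "tool" for e in events),
        "agent": sum(e.get("entity_type") == "application" for e in events),
        "chat_msg": sum(e.get("action") == "SIO chat_predict" for e in events),
        "errors": sum(bool(e.get("is_error")) for e in events),
    }


user_events = [
    {"timestamp": datetime(2024, 6, 1, 9, 0), "event_type": "llm"},
    {"timestamp": datetime(2024, 6, 1, 17, 30), "event_type": "tool", "is_error": True},
    {"timestamp": datetime(2024, 6, 3, 8, 15), "event_type": "socketio", "action": "SIO chat_predict"},
]
row = user_row("ana@example.com", user_events)
print(row)
```

Two of the three sample events fall on the same date, so Days is 2 while Events is 3.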

User Detail View

Click any user row to open a drill-down view:

- Header: User email with a back arrow.

- KPI Cards: LLM Calls, Tool Calls, Chat Msg, Agent Runs, Active Days, Errors.

- Daily Activity Chart: Area chart with four series — Chat Msg (purple), LLM (blue), Tool (green), Agent (orange) — showing how this user’s activity is distributed over time.

- Models Used list: AI models this user queried, with call counts.

- Tools Used list: Tools this user triggered, with call counts.

- Agents Used list: Agents this user interacted with, with run counts.

Health Tab

The Health tab provides system reliability metrics, helping you identify error patterns and latency issues.

The Requests vs Errors chart plots two series:

- Total Requests (blue): all events per day.

- Errors (red): events that resulted in an error per day.

The Health by Event Type table lists one row per event type:

| Column | Description |
|---|---|
| Event Type | Category of platform event (color-coded dot) |
| Total | Total events of this type |
| Errors | Error count (red when > 0) |
| Error Rate | Errors / Total × 100% (red when > 5%) |
| Avg Latency | Mean duration in ms or seconds |
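The Error Rate formula and its 5% highlight threshold can be sketched as below. The record keys (`is_error`, `duration_ms`) and the names `health_by_event_type` and `ERROR_RATE_ALERT_PCT` are assumptions introduced for illustration only.

```python
from collections import defaultdict

ERROR_RATE_ALERT_PCT = 5.0  # rates strictly above this are shown in red


def health_by_event_type(events):
    """Aggregate totals, errors, error rate and mean latency per event type."""
    totals = defaultdict(lambda: {"total": 0, "errors": 0, "duration_ms": 0.0})
    for e in events:
        bucket = totals[e["event_type"]]
        bucket["total"] += 1
        bucket["errors"] += 1 if e.get("is_error") else 0
        bucket["duration_ms"] += e.get("duration_ms", 0.0)

    table = {}
    for event_type, bucket in totals.items():
        rate = 100 * bucket["errors"] / bucket["total"]  # Errors / Total x 100%
        table[event_type] = {
            "total": bucket["total"],
            "errors": bucket["errors"],
            "error_rate_pct": rate,
            "avg_latency_ms": bucket["duration_ms"] / bucket["total"],
            "highlight": rate > ERROR_RATE_ALERT_PCT,
        }
    return table


# 20 llm events with 1 error (5%), 10 tool events with 1 error (10%).
sample = (
    [{"event_type": "llm", "duration_ms": 2000.0, "is_error": i == 0} for i in range(20)]
    + [{"event_type": "tool", "duration_ms": 300.0, "is_error": i == 0} for i in range(10)]
)
health = health_by_event_type(sample)
print(health)
```

Because the comparison is strict, a rate of exactly 5% is not highlighted in this sketch, matching the "> 5%" wording in the table.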

| Event Type | Color | Meaning |
|---|---|---|
| api | Blue | HTTP REST API calls (UI actions, data fetches) |
| socketio | Teal | WebSocket events (chat, real-time features) |
| llm | Purple | AI model calls (Claude, GPT, Gemini, etc.) |
| tool | Orange | Tool executions (Jira, Slack, web search, etc.) |
| agent | Green | Agent workflow invocations |
| rpc | Yellow | Internal service-to-service calls |

High latency on llm events is normal (model inference takes time). Elevated latency on api or rpc calls may indicate infrastructure or configuration issues worth investigating.

Guide Tab

The Guide tab is a built-in metric glossary embedded directly in the Analytics page. It explains every KPI, chart, and table column with:

- Description: What the metric represents in plain language.

- Calculation: The exact formula used to compute the value.

- Data source: Which event types or platform actions contribute to the metric.

The glossary is organized into the following sections:

- Overview Tab metrics

- Overview Charts

- Agents Tab metrics

- Tools Tab metrics

- Users Tab metrics

- Health Tab metrics

- General Concepts (event types, date range behavior, project scope)

Limitations

| Limitation | Details |
|---|---|
| Data freshness | API responses are cached for 5 minutes. Switching tabs or adjusting the date range within that window may serve cached data. |
| Overview and Health data shared | The Overview and Health tabs use the same underlying API call. Switching between them does not trigger a new request while cached data is still fresh. |
| Agent / Tool / User tabs are independent | Each tab fetches data separately with server-side pagination. Navigation within a tab (pagination, search) triggers new API requests. |
| Top charts limited to 20 items | The Most Active Agents and Most Popular Tools bar charts always show the top 20 items only. Use the paginated table below each chart to browse additional entries. |
| Trace-ID correlation | Tool-to-agent associations (in detail views) are resolved by matching trace_id values. This is only available when trace IDs are correctly propagated by the SDK. |
| Project-only scope | There is no cross-project or portfolio-level view within Analytics. For cross-project analysis, use the Monitoring section in Settings. |
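The data-freshness limitation is typical of a time-to-live cache. The sketch below shows the general idea only: the class `TTLCache`, its method names, and the cache key are hypothetical, and the source does not describe how the product keys or stores its cached responses.

```python
import time


class TTLCache:
    """Return a stored value until it is older than `ttl_seconds`, then refetch."""

    def __init__(self, ttl_seconds=300, clock=time.monotonic):
        self._ttl = ttl_seconds  # 300 s corresponds to the 5-minute window
        self._clock = clock      # injectable so the behaviour can be checked
        self._entries = {}

    def get_or_fetch(self, key, fetch):
        now = self._clock()
        hit = self._entries.get(key)
        if hit is not None and now - hit[0] < self._ttl:
            return hit[1]        # still fresh: no new API request
        value = fetch()          # stale or missing: call the API again
        self._entries[key] = (now, value)
        return value


# Simulated clock and fetch counter to show when a refetch happens.
fake_now = [0.0]
fetch_calls = []


def fetch_overview():
    fetch_calls.append(fake_now[0])
    return f"response #{len(fetch_calls)}"


cache = TTLCache(ttl_seconds=300, clock=lambda: fake_now[0])
first = cache.get_or_fetch("overview", fetch_overview)
fake_now[0] = 299.0
second = cache.get_or_fetch("overview", fetch_overview)  # served from cache
fake_now[0] = 300.0
third = cache.get_or_fetch("overview", fetch_overview)   # refetched
print(first, second, third)
```

If the Overview and Health tabs shared one key in such a cache, switching between them would reuse the stored response, which is consistent with the behaviour described in the second row.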

Practical Examples

Measuring AI Adoption Across Your Team

- Open Settings → Analytics.

- Select the Last 30d preset.

- On the Overview tab, read the TEAM and AI ACTIVE KPI cards.

- The Adoption Rate badge (e.g., ↑ 72%) tells you what proportion of your registered team members actively used AI features in the past 30 days.

- Scroll to the Top 5 AI Adopters leaderboard to identify your most active members; click any name to drill into their individual activity in the Users tab.

Identifying Underused or Overloaded Agents

- Open the Agents tab.

- Use the Most Active Agents bar chart to quickly see which agents receive the most traffic.

- In the Agent Activity table, scan the Events column (sorted highest first by default) to find heavily used agents.

- Click any agent to open its detail view and check Avg Latency and Error Rate. A high error rate (> 5%) highlights agents that may need debugging or configuration review.

- Agents with zero events in the selected period may be deprecated or not yet discovered by your team.

Auditing Tool Usage and Reliability

- Open the Tools tab.

- The Most Popular Tools chart gives an at-a-glance view of which integrations are relied upon most.

- In the Tool Details table, look for tools with a non-zero Errors count (displayed in red).

- Click a tool with errors to open its detail view: the Daily Usage chart reveals when errors spiked, and the Users sub-table shows which users encountered them.

- Cross-reference with the Health tab to check the system-wide error rate for the tool event type during the same period.

Reviewing an Individual User's Activity

- Open the Users tab.

- Use the search box to filter by the user’s email address.

- Click the user’s row to open their detail view.

- Review the Daily Activity area chart to see on which days they were most active and which event types dominate.

- The Models Used, Tools Used, and Agents Used lists show exactly which platform resources this user engaged with — useful for onboarding support or license reviews.

Investigating an Error Spike

- Open the Health tab.

- In the Requests vs Errors chart, identify the date range of the error spike.

- In the Health by Event Type table, find the row with the highest Error Rate (values > 5% are highlighted in red).

- Note the event type (e.g., llm or tool) and navigate to the corresponding tab (Agents or Tools) to identify which specific agent or tool was responsible.

- Narrow the date range using the From/To pickers to focus on the spike period, then re-examine the relevant tab.

Best Practices

Start with a Meaningful Date Range

Before reading any metrics, set the date range that matches your analysis goal. Use Last 7d for recent activity reviews, Last 30d for monthly reporting, and custom pickers for audit periods tied to specific events or releases.

Use the Guide Tab Before Drawing Conclusions

Open the Guide tab to review exact metric definitions and calculation formulas. Metrics like TEAM (active users vs. total ever seen) and AI ACTIVE (LLM/Tool/Agent users only) have specific scopes that affect interpretation.

Combine Overview and Users Tabs for Adoption Analysis

Use the Overview tab to get the aggregate adoption picture, then drill down via the Top 5 AI Adopters leaderboard or the Users tab to identify specific individuals for coaching or recognition.

Monitor Health Regularly

Review the Health tab after deployments or integration changes. A sudden increase in llm or tool error rates often signals a misconfigured AI model or a broken external service credential.

Cross-Reference Agents and Tools Tabs

When an agent shows high errors in the Agents tab, open its detail view and check the Tools sub-table. Then visit the Tools tab, click the problematic tool, and check which other agents also rely on it — helping you assess the blast radius of a broken integration.

Leverage Cross-Tab Navigation
