The available settings and configurations may vary depending on the project selected. Select the appropriate project from the project dropdown menu to access specific configurations relevant to that project.

Accessing AI Configuration

To access the AI Configuration section:

- Click the Settings icon in the main navigation sidebar.

- Select AI Configuration from the settings menu.

- The AI Configuration interface opens with two tabs:

  - AI Configuration - Main configuration and integrations management
  - OpenAI Template - Code examples and templates

AI Configuration Tab

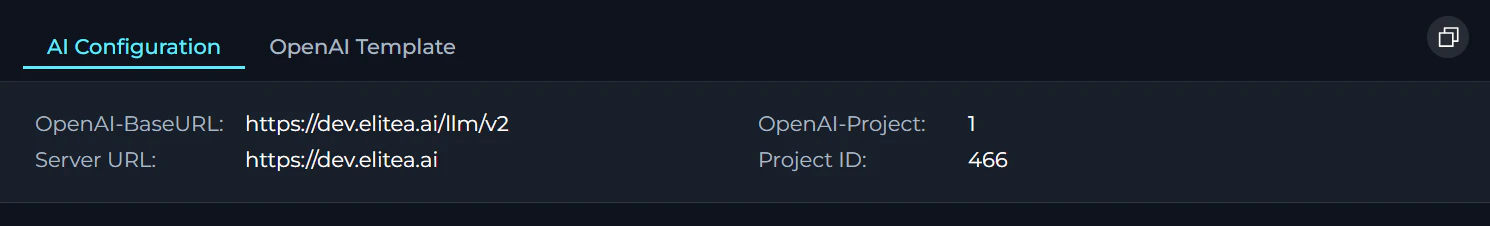

Server Configuration Fields

The top section displays essential server and project information with click-to-copy functionality:

| Field | Description |
|---|---|
| OpenAI-BaseURL | The API endpoint URL for OpenAI-compatible services (formatted as {server_url}/llm/v2) |
| OpenAI-Project | The project identifier used for OpenAI API compatibility |
| Server URL | The base server URL for your ELITEA instance |
| Project ID | The unique identifier for your current project |
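As a minimal sketch of how these fields fit together, the snippet below builds (but does not send) an OpenAI-style chat-completions request using only the Python standard library. It assumes that the endpoint follows the OpenAI path convention (/chat/completions under the OpenAI-BaseURL), that the OpenAI-Project value is sent in an OpenAI-Project header, and that a personal token is passed as a Bearer token. The server URL, project value, and token are placeholders; the code generated on the OpenAI Template tab remains the authoritative reference.

```python
import json
import urllib.request


def build_chat_request(server_url: str, openai_project: str, token: str,
                       model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat-completions request.

    Assumptions: OpenAI-BaseURL = {server_url}/llm/v2, the standard
    /chat/completions path, and an OpenAI-Project header for the project.
    """
    base_url = server_url.rstrip("/") + "/llm/v2"  # the OpenAI-BaseURL field
    body = {
        "model": model,  # a model name configured in this project
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=base_url + "/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",  # personal token (placeholder)
            "OpenAI-Project": openai_project,    # the OpenAI-Project field
            "Content-Type": "application/json",
        },
        method="POST",
    )


req = build_chat_request("https://elitea.example.com", "<OpenAI-Project value>",
                         "Your_Personal_Token", "gpt-4o", "Hello!")
print(req.full_url)  # https://elitea.example.com/llm/v2/chat/completions
```

Passing req to urllib.request.urlopen sends it. If the server behaves as assumed, the response body is a JSON completion in the OpenAI format.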

Integrations

The Integrations section displays all configured AI service integrations organized by type. Click the + button in the header to create new integrations.

LLM Models

| Setting | Description | Examples |
|---|---|---|
| Default | Used for most activities by default. Start here; switch to Low-tier or High-tier when needed | General purpose models |
| High-tier | More capable (and more expensive) models for complex workflows (multi-step reasoning, heavy tool usage) | Anthropic Sonnet 4.5/Opus, OpenAI GPT-5.2, Google Gemini 3 Pro |
| Low-tier | Cheaper/faster models for routine tasks (diagram fixing, formatting, simple edits) | GPT mini from OpenAI, Gemini Flash from Google, Anthropic Haiku |
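The tier guidance above amounts to a simple routing rule. The sketch below is a hypothetical illustration, not ELITEA's internal logic: the task labels are invented, and falling back to the Default model when a tier has no model configured is an assumption.

```python
from enum import Enum
from typing import Optional


class Tier(Enum):
    LOW = "low"
    DEFAULT = "default"
    HIGH = "high"


# Hypothetical task labels mapped to tiers, following the table above.
TASK_TIERS = {
    "diagram_fix": Tier.LOW,
    "formatting": Tier.LOW,
    "simple_edit": Tier.LOW,
    "multi_step_reasoning": Tier.HIGH,
    "heavy_tool_use": Tier.HIGH,
}


def pick_model(tier: Tier, default: str,
               high: Optional[str] = None, low: Optional[str] = None) -> str:
    """Return the model for a tier; fall back to Default if that tier is unset."""
    if tier is Tier.HIGH and high:
        return high
    if tier is Tier.LOW and low:
        return low
    return default


def pick_model_for_task(task: str, default: str,
                        high: Optional[str] = None,
                        low: Optional[str] = None) -> str:
    """Tasks without a tier mapping use the Default model ("start here")."""
    return pick_model(TASK_TIERS.get(task, Tier.DEFAULT), default, high, low)
```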

Each LLM configuration displays:

- Integration Icon - Visual indicator of the provider (OpenAI, Azure, Vertex AI, Claude, Amazon Bedrock, Ollama, HuggingFace, AI Dial, etc.)

- Model Name - Display name of the configured model

- Status Indicator - Shows “OK • Shared” or “OK • Local” depending on sharing status

- Tier Badges - If applicable, displays “High-Tier”, “Low-Tier”, or “Default” badge

- Click to Edit - Click on any card to view or edit configuration details (if permissions allow)

Creating a New LLM Model:

- Ensure Credentials Exist - Before adding a model, create AI credentials for your provider (see AI Credentials section)

- Click the + Button - In the Integrations section header (or main navigation sidebar), click the + button

- Select Integration Type - Choose LLM Model from the list of available integration types

- Fill Required Fields:

| Field | Description |
|---|---|
| Display Name | Enter a friendly name for the model (e.g., “GPT-4o Production”) |
| ID* | Auto-populated from the Display Name |
| Name* | Enter the exact model identifier from the provider (e.g., “gpt-4o”, “claude-3-sonnet-20240229”) |
| Context Window | Specify the maximum context window size in tokens (e.g., 128000) |
| Max Output Tokens | Set the maximum output token limit (e.g., 16000) |
| Supports Reasoning | Enable if the model supports reasoning capabilities (e.g., GPT-5.1, o3-mini) |
| Supports Vision | Enable if the model can process and understand images |
| Low Tier | Mark this model as suitable for routine, cost-effective tasks |
| High Tier | Mark this model as suitable for complex workflows requiring advanced capabilities |
| Credentials | Select the AI credential configuration from the dropdown |

- Click Save - The model will be created and appear in the LLM Models section

- Set as Default (Optional) - Use the dropdown in Default Model Settings to assign this model as the Default model
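To make the form's constraints concrete, here is a hypothetical Python model of an LLM Model entry. The slug rule that derives the ID from the Display Name is an assumption (ELITEA's actual rule is not documented here). The check that Max Output Tokens does not exceed Context Window is a general sanity check, not a documented ELITEA validation. The Name check reflects the requirement that it match the provider's identifier exactly.

```python
import re
from dataclasses import dataclass, field


def slugify(display_name: str) -> str:
    """Hypothetical ID rule: lowercase, runs of other characters -> '_'."""
    return re.sub(r"[^a-z0-9]+", "_", display_name.lower()).strip("_")


@dataclass
class LLMModelConfig:
    display_name: str            # e.g. "GPT-4o Production"
    name: str                    # exact provider identifier, e.g. "gpt-4o"
    credentials: str             # an existing AI Credentials entry
    context_window: int = 128_000
    max_output_tokens: int = 16_000
    supports_reasoning: bool = False
    supports_vision: bool = False
    low_tier: bool = False
    high_tier: bool = False
    model_id: str = field(init=False)  # the ID* field, derived below

    def __post_init__(self) -> None:
        self.model_id = slugify(self.display_name)
        # Name* must match the provider exactly: not empty, no padding.
        if not self.name or self.name != self.name.strip():
            raise ValueError(f"invalid model name: {self.name!r}")
        # Sanity check (assumption): the output limit must fit in the context.
        if self.max_output_tokens > self.context_window:
            raise ValueError("Max Output Tokens exceeds Context Window")
```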

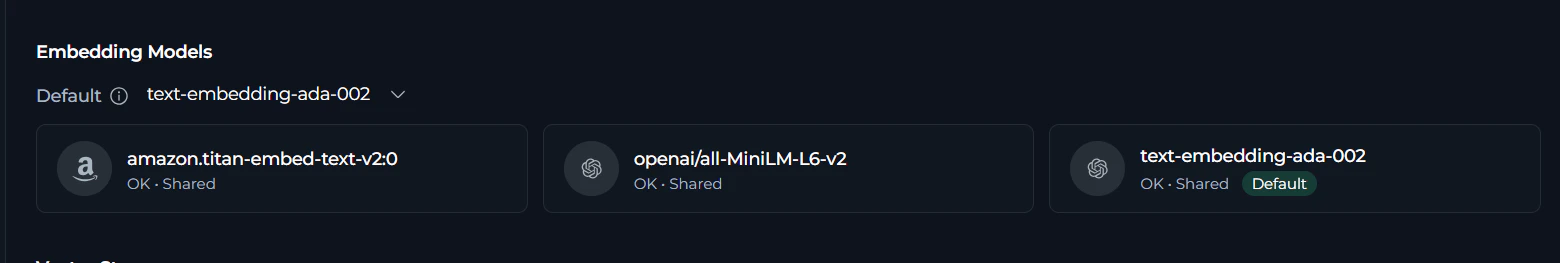

Embedding Models

Each embedding model configuration displays:

- Integration Icon - Visual indicator of the provider (OpenAI, Azure, HuggingFace, etc.)

- Model Name - Display name of the configured embedding model

- Status Indicator - Shows “OK • Shared” or “OK • Local” depending on sharing status

- Click to Edit - Click on any card to view or edit configuration details (if permissions allow)

Creating a New Embedding Model:

- Ensure Credentials Exist - Before adding a model, create AI credentials for your provider (see AI Credentials section)

- Click the + Button - In the Integrations section header (or main navigation sidebar), click the + button

- Select Integration Type - Choose Embedding Model from the list of available integration types

- Fill Required Fields:

| Field | Description |
|---|---|
| Display Name | Enter a descriptive name (e.g., “OpenAI Embeddings Large”) |
| ID* | Auto-populated from the Display Name |
| Name* | Enter the exact model identifier (e.g., “text-embedding-3-large”, “text-embedding-ada-002”) |
| Credentials | Select credentials from the dropdown |

- Click Save - The embedding model will be created and available for selection

- Set as Default (Optional) - Use the “Default embedding model” dropdown to make this the default for RAG operations

Commonly used embedding models and their vector dimensions:

- OpenAI: text-embedding-3-large (3072 dims), text-embedding-3-small (1536 dims), text-embedding-ada-002 (1536 dims)

- Azure OpenAI: Same models as OpenAI, use your Azure deployment name

- HuggingFace: sentence-transformers/all-MiniLM-L6-v2 (384 dims), BAAI/bge-large-en-v1.5 (1024 dims)

- Vertex AI: textembedding-gecko@003 (768 dims)
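When you choose or change the default embedding model, the vector dimension matters, because stored vectors can only be searched with query vectors of the same size. The sketch below encodes the dimensions listed above and compares them with the dimension of an existing index. The index_dim parameter is hypothetical and stands in for however your vector storage records its vector size.

```python
# Vector dimensions of the embedding models listed above.
EMBEDDING_DIMS = {
    "text-embedding-3-large": 3072,
    "text-embedding-3-small": 1536,
    "text-embedding-ada-002": 1536,
    "sentence-transformers/all-MiniLM-L6-v2": 384,
    "BAAI/bge-large-en-v1.5": 1024,
    "textembedding-gecko@003": 768,
}


def is_compatible(model: str, index_dim: int) -> bool:
    """True if `model` produces vectors of the size the index stores.

    `index_dim` is hypothetical: the dimension of the existing vector
    column or collection. Models missing from the table return False.
    """
    return EMBEDDING_DIMS.get(model) == index_dim
```

A matching dimension is necessary but not sufficient. text-embedding-ada-002 and text-embedding-3-small both produce 1536-dimensional vectors, yet their vectors are not interchangeable, so switching between them still requires re-embedding existing content.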

Vector Storage

Each vector storage configuration displays:

- Integration Icon - Visual indicator of the provider (PGVector, Chroma, etc.)

- Storage Name - Display name of the configured vector storage

- Status Indicator - Shows “OK • Shared” or “OK • Local” depending on sharing status

- Click to Edit - Click on any card to view or edit configuration details (if permissions allow)

Creating a New Vector Storage Configuration:

- Click the + Button - In the Integrations section header (or main navigation sidebar), click the + button

- Select Integration Type - Choose Vector Storage from the list of available integration types

- Select Vector Storage Provider - Choose PGVector (PostgreSQL extension for vector storage)

- Fill Required Fields:

| Field | Description |
|---|---|
| Display Name | Enter a descriptive name (e.g., “Production PGVector”) |
| ID* | Auto-populated from the Display Name |
| Connection String | PostgreSQL connection string |

- Click Save - The vector storage configuration will be created

- Set as Default (Optional) - Use the “Default vector storage” dropdown to make this the default
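PostgreSQL connection strings are commonly written in URI form: postgresql://user:password@host:port/database. The sketch below splits such a string with the standard library so you can check the host, port, and database before saving. The page does not say whether ELITEA expects this plain URI form or a driver-qualified scheme such as postgresql+psycopg://, so the check only requires a "postgres" prefix. The password is deliberately left out of the result so it does not end up in logs.

```python
from urllib.parse import urlsplit


def describe_pg_dsn(dsn: str) -> dict:
    """Split a URI-style PostgreSQL connection string (password omitted)."""
    parts = urlsplit(dsn.strip())
    # Accepts postgresql://, postgres://, and driver forms like postgresql+psycopg://
    if not parts.scheme.startswith("postgres"):
        raise ValueError(f"not a PostgreSQL URI: scheme {parts.scheme!r}")
    if not parts.hostname:
        raise ValueError("connection string has no host")
    return {
        "user": parts.username,
        "host": parts.hostname,
        "port": parts.port or 5432,          # 5432 is PostgreSQL's default port
        "database": parts.path.lstrip("/"),  # the path holds the database name
    }
```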

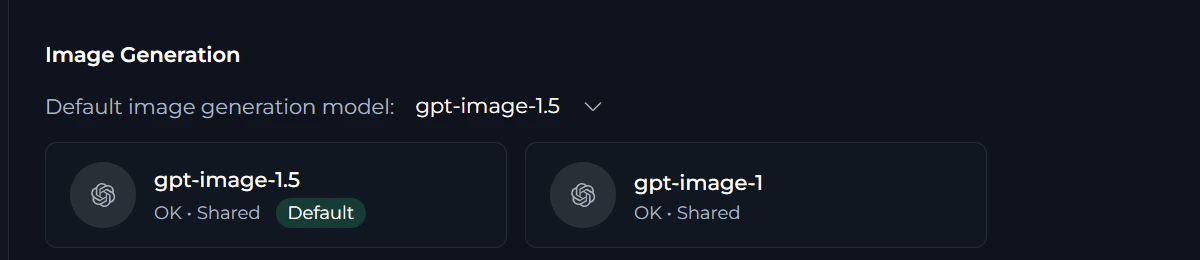

Image Generation

Each image generation model configuration displays:

- Integration Icon - Visual indicator of the provider (OpenAI DALL-E, Azure, etc.)

- Model Name - Display name of the configured image generation model

- Status Indicator - Shows “OK • Shared” or “OK • Local” depending on sharing status

- Click to Edit - Click on any card to view or edit configuration details (if permissions allow)

Creating a New Image Generation Model:

- Ensure Credentials Exist - Before adding a model, create AI credentials for your provider (see AI Credentials section)

- Click the + Button - In the Integrations section header (or main navigation sidebar), click the + button

- Select Integration Type - Choose Image Generation Model from the list of available integration types

- Fill Required Fields:

| Field | Description |
|---|---|
| Display Name | Enter a descriptive name (e.g., “DALL-E 3 Standard”) |
| ID* | Auto-populated from the Display Name |
| Name* | Enter the exact model identifier (e.g., “dall-e-3”, “dall-e-2”) |
| Credentials | Select the appropriate AI credential from the dropdown |

- Click Save - The image generation model will be created and available

- Set as Default (Optional) - Use the “Default image generation model” dropdown to set as default

Common image generation options:

- OpenAI DALL-E 3: High-quality image generation with text rendering capabilities

- OpenAI DALL-E 2: Fast image generation with good quality

- Azure OpenAI: DALL-E models through Azure endpoints

- Other Providers: Stability AI, Midjourney (if configured with appropriate integrations)

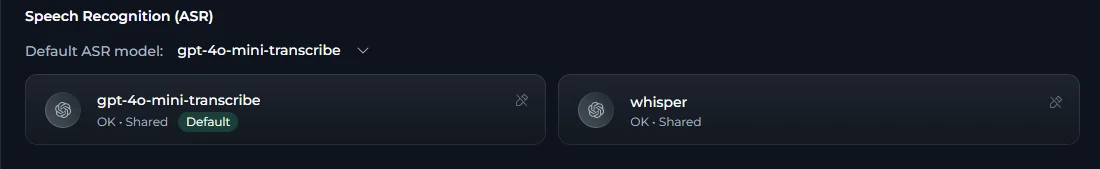

Speech Recognition (ASR)

| Model Type | Name Pattern | Behavior |
|---|---|---|
| Streaming (Realtime) | Names that do not include whisper or transcribe | Low-latency streaming via OpenAI Realtime API; interim transcript results appear in real time |
| Batch (Whisper) | Names that include whisper or transcribe (e.g., whisper-1, gpt-4o-transcribe) | Batch HTTP transcription; audio is buffered and sent in chunks, transcript delivered after processing |
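The naming rule in the table can be expressed as a one-line classifier. This sketch follows the documented rule and assumes a case-insensitive substring match; ELITEA's exact matching may differ.

```python
# Substrings that mark a model as batch (Whisper-style), per the table above.
BATCH_MARKERS = ("whisper", "transcribe")


def asr_mode(model_name: str) -> str:
    """Return "batch" or "streaming" for an ASR model name (case-insensitive)."""
    name = model_name.lower()
    return "batch" if any(m in name for m in BATCH_MARKERS) else "streaming"
```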

Each ASR configuration card displays:

- Integration Icon - Visual indicator of the provider

- Model Name - Display name of the configured ASR model

- Status Indicator - Shows “OK • Shared” or “OK • Local” depending on sharing status

- Click to Edit - Click on any card to view or edit configuration details (if permissions allow)

Creating a New ASR Model:

- Ensure Credentials Exist - Before adding an ASR model, create AI credentials for your provider (see AI Credentials section)

- Click the + Button - In the Integrations section header, click the + button

- Select Integration Type - Choose Speech Recognition (ASR) from the list of available integration types

- Fill Required Fields:

| Field | Description |
|---|---|
| Display Name | Enter a descriptive name (e.g., “OpenAI Whisper”, “Realtime Transcription”) |
| ID* | Auto-populated from the Display Name |
| Name* | Enter the exact model identifier from the provider (e.g., whisper-1, gpt-4o-transcribe). Names containing whisper or transcribe are treated as batch models; all others are treated as streaming/Realtime models |
| Credentials | Select the AI credential configuration from the dropdown |

- Click Save - The ASR model will be created and appear in the Speech Recognition (ASR) section

- Set as Default (Optional) - Use the Default ASR model dropdown to make this the default for voice input

The model name determines the transcription mode. Use whisper-1 or gpt-4o-transcribe for batch transcription. For real-time streaming, configure a model from the OpenAI Realtime API (e.g., via an AI Dial or Azure OpenAI credential that supports Realtime).

Text to Speech (TTS)

- If no TTS model is configured, the browser SpeechSynthesis API is used as a fallback.

Each TTS configuration card displays:

- Integration Icon - Visual indicator of the provider

- Model Name - Display name of the configured TTS model

- Status Indicator - Shows “OK • Shared” or “OK • Local” depending on sharing status

- Click to Edit - Click on any card to view or edit configuration details (if permissions allow)

Creating a New TTS Model:

- Ensure Credentials Exist - Before adding a TTS model, create AI credentials for your provider (see AI Credentials section)

- Click the + Button - In the Integrations section header, click the + button

- Select Integration Type - Choose Text to Speech (TTS) from the list of available integration types

- Fill Required Fields:

| Field | Description |
|---|---|
| Display Name | Enter a descriptive name (e.g., “OpenAI TTS”, “Azure Neural Voice”) |
| ID* | Auto-populated from the Display Name |
| Name* | Enter the exact model identifier from the provider (e.g., tts-1, tts-1-hd) |
| Credentials | Select the AI credential configuration from the dropdown |

- Click Save - The TTS model will be created and appear in the Text to Speech (TTS) section

- Set as Default (Optional) - Use the Default TTS model dropdown to make this the default for voice output

Server-side TTS delivers audio as PCM-16-LE chunks via Socket.IO, played back through the Web Audio API at 24 kHz. Text is highlighted in sync with playback. Pause and resume are fully supported for both server-side and browser-fallback TTS.
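For readers integrating with the audio stream, the format's arithmetic is simple. PCM-16-LE stores each sample as a signed 16-bit little-endian integer (2 bytes), so one second of 24 kHz mono audio is 48,000 bytes. The Python sketch below decodes a chunk into floats in the [-1.0, 1.0) range that Web Audio buffers use and computes the chunk's playback time. Mono audio is an assumption, since the channel count is not stated above.

```python
import struct
from typing import List

SAMPLE_RATE_HZ = 24_000  # playback rate stated above
BYTES_PER_SAMPLE = 2     # 16-bit PCM


def pcm16le_to_floats(chunk: bytes) -> List[float]:
    """Decode little-endian signed 16-bit samples into floats in [-1.0, 1.0)."""
    if len(chunk) % BYTES_PER_SAMPLE:
        raise ValueError("PCM-16 chunk length must be even")
    count = len(chunk) // BYTES_PER_SAMPLE
    samples = struct.unpack(f"<{count}h", chunk)
    return [s / 32768.0 for s in samples]


def chunk_duration_seconds(chunk: bytes, channels: int = 1) -> float:
    """Playback time of one chunk; mono is assumed by default."""
    return len(chunk) / (BYTES_PER_SAMPLE * channels * SAMPLE_RATE_HZ)
```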

AI Credentials

AI Credentials are authentication configurations that store API keys, tokens, endpoints, and connection strings for accessing AI service providers. These credentials serve as the foundation for other integrations, enabling secure communication with external AI platforms without exposing sensitive authentication details in individual model configurations. Multiple models and integrations can reference the same credentials, providing centralized credential management.

Each AI Credentials configuration displays:

- Integration Icon - Visual indicator of the provider (OpenAI, Azure, Anthropic, Vertex AI, etc.)

- Credential Name - Display name of the configured credentials

- Status Indicator - Shows “OK • Shared” or “OK • Local” depending on sharing status

- Click to Edit - Click on any card to view or edit configuration details (if permissions allow)

Creating New AI Credentials:

- Click the + Button - In the Integrations section header (or main navigation sidebar), click the + button

- Select Integration Type - Choose AI Credentials from the list of available integration types

- Select Provider Type - Choose the AI service provider you want to configure:

| Provider | Description |
|---|---|
| AI Dial | For EPAM AI Dial platform |
| Amazon Bedrock | For AWS AI services |
| Azure OpenAI | For Azure-hosted OpenAI services |
| Ollama | For local/self-hosted models |
| OpenAI | For OpenAI API access |
| Vertex AI | For Google Cloud AI services |

- Fill Provider-Specific Fields:

For OpenAI:

| Field | Description | Example |
|---|---|---|
| Display Name* | Enter a descriptive name | “OpenAI Production Key” |
| ID* | Auto-populated from the Display Name | |
| Api Base* | OpenAI API endpoint URL | https://api.openai.com/v1 |
| Api Key | Your OpenAI API key from platform.openai.com | sk-proj-... |

For Azure OpenAI:

| Field | Description | Example |
|---|---|---|
| Display Name* | Enter a descriptive name | “Azure OpenAI East US” |
| ID* | Auto-populated from the Display Name | |
| Api Base* | Azure OpenAI endpoint URL | https://your-resource.openai.azure.com |
| Api Key | Azure OpenAI API key from Azure portal | a1b2c3d4e5f6... |
| Api Version | Azure API version | 2024-02-15-preview |

For Vertex AI:

| Field | Description | Example |
|---|---|---|
| Display Name | Enter a descriptive name | “GCP Vertex AI” |
| ID* | Auto-populated from the Display Name | |
| Vertex Project* | Google Cloud project ID | my-gcp-project-123456 |
| Vertex Location* | GCP region | us-central1 |
| Vertex Credentials* | Paste the complete JSON content from the downloaded service account key file | {"type": "service_account", "project_id": "...", ...} |

For Amazon Bedrock:

| Field | Description | Example |
|---|---|---|
| Display Name* | Enter a descriptive name | “AWS Bedrock US-East-1” |
| ID* | Auto-populated from the Display Name | |
| Aws Access Key Id | IAM access key | AKIAIOSFODNN7EXAMPLE |
| Aws Secret Access Key | IAM secret access key | wJalrXUtnFEMI/K7MDENG/bPxRfiCYEXAMPLEKEY |
| Aws Region Name | AWS region | us-east-1 |

For AI Dial:

| Field | Description | Example |
|---|---|---|
| Display Name | Enter a descriptive name | “EPAM AI Dial Personal” |
| ID* | Auto-populated from the Display Name | |
| Api Base* | AI Dial endpoint URL | https://ai-proxy.lab.epam.com |
| Api Key | Your personal AI Dial token | aidial_... |
| Api Version | API version for AI Dial | 2025-04-01-preview |

For Ollama:

| Field | Description | Example |
|---|---|---|
| Display Name* | Enter a descriptive name | “Local Ollama Server” |
| ID* | Auto-populated from the Display Name | |
| Api Base* | Ollama server URL | http://localhost:11434 |

- Click Save - The credential configuration will be created and available for use
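Vertex Credentials is the field most easily broken by copy-paste, because a service account key is a multi-line JSON document. The sketch below checks a pasted key before saving. The required keys it looks for (type, project_id, private_key, client_email) are standard fields of a Google Cloud service account key file. The function name and the project-mismatch warning are illustrative and do not describe ELITEA behavior.

```python
import json
from typing import List

# Standard fields of a Google Cloud service account key file.
REQUIRED_KEYS = ("type", "project_id", "private_key", "client_email")


def check_vertex_credentials(raw: str, vertex_project: str) -> List[str]:
    """Return problems found in a pasted service account key (empty if none)."""
    try:
        key = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [f"not valid JSON (incomplete paste?): {exc.msg}"]
    if not isinstance(key, dict):
        return ["expected a JSON object"]
    problems = [f"missing field: {k}" for k in REQUIRED_KEYS if k not in key]
    if key.get("type", "service_account") != "service_account":
        problems.append(f"type is {key['type']!r}, expected 'service_account'")
    if key.get("project_id", vertex_project) != vertex_project:
        # Not necessarily an error: the account may have been granted access.
        problems.append(
            f"warning: key project {key['project_id']!r} differs from "
            f"Vertex Project {vertex_project!r}")
    return problems
```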

Default Model Selection

The default model settings in the Integrations section control which integrations are selected automatically, for example in new chats and agents:

- Default Model - Used for most activities (new chats, agents, general operations)

- High-tier Model - Selected when high-capability processing is needed

- Low-tier Model - Used for routine, cost-effective tasks

- Default Embedding Model - Used for text embedding operations

- Default Vector Storage - Used for vector database operations

- Default Image Generation Model - Used for image generation tasks

- Default ASR Model - Used for server-side voice input (speech-to-text) in Chat, Agents, and Pipelines

- Default TTS Model - Used for reading AI responses aloud (text-to-speech) in Chat, Agents, and Pipelines

How default settings are applied:

- New chat conversations use the default model unless manually changed

- New agents use the default model unless manually configured

- Existing conversations and agents keep their previously configured models

- Changes to default settings take effect immediately; no separate save is required

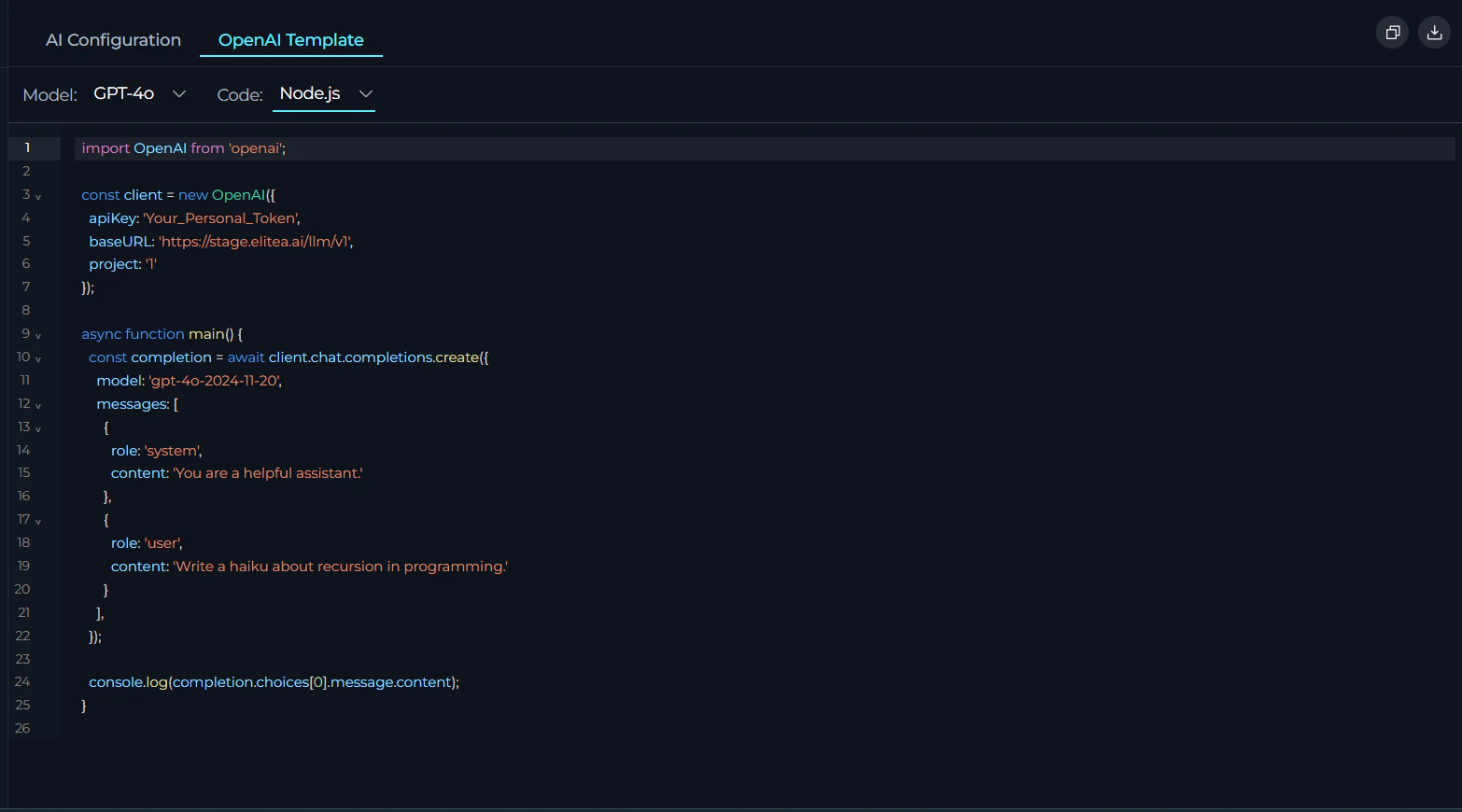

OpenAI Template Tab

The OpenAI Template tab generates ready-to-use code examples for integrating applications with ELITEA’s AI models through the OpenAI-compatible API. Templates are pre-configured with your server settings and are available in cURL, Node.js, and Python, so they can be used without manual setup.

How to Access: Click “OpenAI Template” in the tab bar at the top of the AI Configuration page.

- Model dropdown - Select from available configured LLM models

- Code dropdown - Choose programming language (cURL, Node.js, or Python)

- Copy Button - Copy the code example to clipboard

- Download Button - Download the code example as a file with the appropriate extension:

  - cURL → api_example.sh
  - Node.js → api_example.js
  - Python → api_example.py

Template features:

- Pre-configured with server URL, project ID, and model settings

- Dynamic updates based on selected model and language

- Includes an authentication placeholder (Your_Personal_Token)

- Syntax highlighting for better readability

Troubleshooting

No Access to LLM Message

Problem: A “No access to LLM” message is displayed when using custom LLM configurations. The exact message may vary depending on the provider.

Solution Steps:

- Verify Provider Access: Use your model provider’s platform help center or documentation pages to confirm you have access to the specific LLM mentioned in your configuration

- Test Credentials: Send a test request (for example, with cURL) to verify that your credentials can successfully access the model

- Check Model Availability: Ensure the model name exactly matches the provider’s model identifier

- Validate Authentication: Confirm your API keys, tokens, or authentication credentials are valid and not expired
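The Test Credentials step can also be done from Python. The sketch below lists the models a credential can see, using two real endpoints: GET {Api Base}/models for the OpenAI API (Bearer token) and GET /api/tags for a local Ollama server. Other providers use different listing endpoints and authentication schemes and are not covered. The helper names are hypothetical.

```python
import json
import urllib.request
from typing import List


def model_list_request(provider: str, api_base: str,
                       api_key: str = "") -> urllib.request.Request:
    """Build a GET request that lists the models visible to a credential."""
    base = api_base.rstrip("/")
    if provider == "openai":
        # OpenAI API: GET {api_base}/models, authenticated with a Bearer token.
        return urllib.request.Request(
            base + "/models", headers={"Authorization": f"Bearer {api_key}"})
    if provider == "ollama":
        # Ollama: GET /api/tags lists locally pulled models; no key is needed.
        return urllib.request.Request(base + "/api/tags")
    raise ValueError(f"provider not covered by this sketch: {provider}")


def model_names(provider: str, payload: dict) -> List[str]:
    """Extract model identifiers from the provider's JSON response."""
    if provider == "openai":
        return [m["id"] for m in payload.get("data", [])]
    return [m["name"] for m in payload.get("models", [])]


def list_models(provider: str, api_base: str, api_key: str = "",
                timeout: float = 10.0) -> List[str]:
    """Send the request; a 401/403 raises urllib.error.HTTPError."""
    req = model_list_request(provider, api_base, api_key)
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return model_names(provider, json.load(resp))
```

For example, list_models("openai", "https://api.openai.com/v1", key) should include the exact value entered in the model's Name field. Ollama model names carry a tag, such as llama3:latest.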

Authentication Failed Errors

Problem: Receiving 401 (Unauthorized) or 403 (Forbidden) errors when testing credentials.

Solution Steps:

- Check Credential Values:

  - Verify API keys, tokens, or passwords are correct
  - Ensure there are no extra spaces or hidden characters
  - Confirm credentials haven’t expired

- Verify Permissions:

  - Ensure your account has the necessary permissions for the integration
  - Check whether specific scopes or roles need to be enabled
  - Verify service URLs are accurate and accessible

- Provider-Specific Checks:

  - OpenAI: Verify your API key starts with sk- and belongs to the correct organization
  - Azure OpenAI: Confirm the API version matches your deployment and the endpoint URL is correct
  - Vertex AI: Ensure the service account in the JSON key has the required IAM permissions
  - Amazon Bedrock: Verify the IAM user has bedrock:InvokeModel permissions
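Some of the credential-value checks above can be automated. The sketch below flags surrounding whitespace, embedded control, format, or space characters (for example, a zero-width space picked up when copying from a web page), and, for OpenAI, a key that does not start with sk-. It is a hypothetical pre-flight check. It cannot tell whether a key is valid or expired; only a request to the provider can confirm that.

```python
import unicodedata
from typing import List

# Unicode categories not expected inside an API key:
# Cc = control (tabs, newlines), Cf = format (zero-width space), Zs = spaces.
SUSPICIOUS = {"Cc", "Cf", "Zs"}


def key_problems(key: str, provider: str = "") -> List[str]:
    """Return copy-paste problems found in an API key (empty if none)."""
    problems = []
    if key != key.strip():
        problems.append("leading/trailing whitespace")
    core = key.strip()
    hidden = sorted({f"U+{ord(c):04X}" for c in core
                     if unicodedata.category(c) in SUSPICIOUS})
    if hidden:
        problems.append("hidden or space characters: " + ", ".join(hidden))
    if provider == "openai" and not core.startswith("sk-"):
        problems.append("OpenAI keys are expected to start with 'sk-'")
    return problems
```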

Connection Errors

Problem: Unable to connect to AI provider endpoints (connection refused, timeouts, SSL errors).

Solution Steps:

- Network Connectivity:

  - Verify you can reach the endpoint URL from your network
  - Check for firewall or proxy restrictions
  - Ensure the endpoint URL is correct and accessible

- SSL/Certificate Issues:

  - Verify the endpoint uses a valid SSL certificate
  - Check whether your organization uses custom certificates
  - For self-hosted solutions, ensure certificates are properly configured

- URL Format:

  - Ensure URLs start with http:// or https://
  - Remove trailing slashes from base URLs
  - Verify the hostname is correct (no typos)
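The URL Format checks can be applied to an Api Base before saving. This sketch uses only urllib.parse. It rejects URLs without an http:// or https:// scheme or without a hostname, and it strips trailing slashes. It does not check that the host is reachable.

```python
from urllib.parse import urlsplit


def normalize_base_url(url: str) -> str:
    """Validate scheme and hostname, then strip surrounding spaces and trailing slashes."""
    url = url.strip()
    parts = urlsplit(url)
    if parts.scheme not in ("http", "https"):
        raise ValueError(f"URL must start with http:// or https://: {url!r}")
    if not parts.hostname:
        raise ValueError(f"URL has no hostname: {url!r}")
    return url.rstrip("/")
```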

Model Not Found Errors

Problem: Errors indicating the specified model doesn’t exist or isn’t available.

Solution Steps:

- Verify Model Name:

  - Check the exact model identifier in the provider documentation
  - Model names are case-sensitive
  - Ensure there are no extra spaces or characters in the model name

- Check Model Availability:

  - Confirm the model is available in your region
  - Verify your subscription/account has access to the model
  - Check whether the model has been deprecated or renamed

- Provider Documentation:

  - OpenAI: Check platform.openai.com/docs/models
  - Anthropic: Check docs.anthropic.com/claude/docs/models-overview
  - Azure OpenAI: Use your deployment name from the Azure portal
  - Vertex AI: Check the Google Cloud console for available models
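Because model names must match exactly, comparing the configured name against the provider's list of model identifiers can pinpoint the mistake. The hypothetical helper below distinguishes stray whitespace, wrong letter case, and likely typos, and it uses difflib.get_close_matches for suggestions. The available list is assumed to come from the provider, for example from its model-listing endpoint or documentation.

```python
import difflib
from typing import List


def check_model_name(name: str, available: List[str]) -> str:
    """Explain why `name` is not in `available`, or return "ok"."""
    if name in available:
        return "ok"
    stripped = name.strip()
    if stripped in available:
        return f"remove surrounding spaces: use {stripped!r}"
    same_ci = [m for m in available if m.lower() == stripped.lower()]
    if same_ci:
        return f"model names are case-sensitive: use {same_ci[0]!r}"
    close = difflib.get_close_matches(stripped, available, n=3, cutoff=0.6)
    if close:
        return "not found; did you mean: " + ", ".join(close)
    return "not found; check region, account access, or deprecation"
```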

EPAM AI Dial Specific Issues

Problem: EPAM AI Dial integration fails when using personal tokens.

Common Issues:

- Limited Model Access: EPAM AI Dial personal tokens issued to EPAM employees have access to a very limited list of models

- Permission Issues: The token may lack the necessary permissions for specific models

- Token Scope: Personal tokens may have restricted capabilities

Solution Steps:

- Verify Model Access:

  - Review the AI Dial documentation for permission check procedures
  - Run permission verification requests for your specific model
  - Confirm your token has access to the model you’re trying to use

- Token Management:

  - Check the token expiration date
  - Verify token permissions and scopes
  - Consider using project-level tokens, if available, for broader model access

- Contact Support:

  - Contact EPAM AI Dial administrators if additional model access is required
  - Request specific model permissions if needed

Credential Configuration Not Appearing

Problem: Newly created credentials don’t show up in dropdown menus or integration lists.

Solution Steps:

- Verify Creation:

  - Confirm the credential was successfully saved
  - Check for any error messages during creation
  - Refresh the page or credential list

- Check Permissions:

  - Ensure you have permissions to view the credential
  - Verify you’re viewing the correct project scope
  - Check whether the credential is marked as “Local” or “Shared”

- Credential Type:

  - Confirm the credential type matches the integration requirement
  - Verify the credential hasn’t been deleted by another user

Rate Limiting or Quota Errors

Problem: Receiving rate limit or quota exceeded errors from AI providers.

Solution Steps:

- Check Usage Limits:

  - Review your provider’s rate limits and quotas
  - Check your current usage in the provider’s dashboard
  - Verify your subscription tier and limits

- Implement Best Practices:

  - Use appropriate tier models (Low-tier for routine tasks)
  - Implement retry logic with exponential backoff
  - Consider upgrading your plan if you consistently hit limits

- Monitor Usage:

  - Track API usage across your team
  - Set up alerts for approaching quota limits
  - Consider using project-level credentials for better visibility
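The retry advice above usually means exponential backoff. After each consecutive rate-limit error, the client waits roughly twice as long, with random jitter so that many clients do not retry in lockstep. The sketch below implements the "full jitter" variant in plain Python. RateLimitError is a hypothetical stand-in for whatever exception your client raises on HTTP 429 or quota errors, and the delays are illustrative defaults, not provider recommendations. The sleep function is injectable so the logic can be tested without waiting.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class RateLimitError(Exception):
    """Hypothetical stand-in for a provider's HTTP 429 / quota error."""


def with_backoff(call: Callable[[], T], max_attempts: int = 5,
                 base_delay: float = 1.0, max_delay: float = 30.0,
                 sleep: Callable[[float], None] = time.sleep) -> T:
    """Retry `call` on RateLimitError, re-raising after max_attempts tries."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    attempt = 0
    while True:
        try:
            return call()
        except RateLimitError:
            attempt += 1
            if attempt >= max_attempts:
                raise
            # Full jitter: wait a random time up to base * 2^(attempt-1), capped.
            cap = min(max_delay, base_delay * 2 ** (attempt - 1))
            sleep(random.uniform(0, cap))
```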