Overview

When you access Personalization from your user profile menu, you’ll find your user information at the top, followed by three main configuration sections organized as expandable accordions:

- General: Set default AI personality and custom instructions

- Default Context Management: Control conversation memory and token usage

- Default Summarization: Configure automatic conversation summarization

Key benefits:

- Comprehensive Control: Manage AI behavior, memory, and summarization in one place

- Automatic Application: Settings apply to all new conversations automatically

- Consistency: Maintain uniform AI behavior across all projects and conversations

- Efficiency: Configure once, use everywhere—no repeated setup

- Optimization: Balance conversation quality with token usage and costs

Accessing Personalization

The Personalization page is accessed through your user profile menu.

Navigation Path

- Click your User Avatar at the bottom of the main sidebar

- Select Personalization from the dropdown menu

Settings Overview

The Personalization page is organized into four accordion sections, each controlling a different aspect of AI behavior. Three are available now; Long-term Memory is marked as coming soon.

| Section | Purpose | Key Settings | When Applied | Can Change Mid-Conversation | Available |

|---|---|---|---|---|---|

| General | Define AI personality and behavior | Default Personality, Default User Instructions | At conversation creation | ✘ No | ✔️ |

| Default Context Management | Manage conversation memory and token usage | Enable Toggle, Max Context Tokens, Preserve Recent Messages | Throughout conversation lifecycle | ✔️ Yes (via Context Budget widget) | ✔️ |

| Default Summarization | Automatically condense long conversations | Enable Toggle, Summarization Instructions, Target Summary Tokens | During conversation when thresholds reached | ✔️ Yes (via Context Budget widget) | ✔️ |

| Long-term Memory | Manage what the AI remembers across conversations | — | — | — | 🔜 Coming Soon |

GENERAL

The General accordion section provides two configuration options that control the AI assistant’s default behavior and communication style.

Default Personality

Select the communication style and approach that the AI assistant will use by default in all new conversations.

Available Personality Options

| Personality | Communication Style | Best For |

|---|---|---|

| Generic | Balanced, professional assistant | General-purpose tasks, standard workflows, versatile applications |

| QA | Precise, technical, testing-focused | Quality assurance tasks, testing workflows, technical validation |

| Nerdy | Technical deep-dives, detailed explanations | Complex technical topics, learning new concepts, in-depth analysis |

| Quirky | Creative, playful, thinking outside the box | Brainstorming sessions, creative problem-solving, innovative approaches |

| Cynical | Skeptical, challenges assumptions | Critical analysis, risk assessment, design reviews |

| None | No personality overlay applied | When you prefer the AI’s default behavior without any personality customization |

Default User Instructions

Provide custom guidelines that automatically apply to all new conversations. These instructions define specific requirements, preferences, or constraints that the AI assistant should follow in every interaction.

Example Instructions by Role

Software Developer

QA Engineer

Technical Writer

DEFAULT CONTEXT MANAGEMENT

The Default Context Management accordion section controls how conversation history is managed in all new conversations. These settings optimize token usage while preserving conversation continuity.

Why context management matters:

- Quality: More context helps AI provide relevant, coherent responses

- Cost: Token usage directly affects API costs

- Performance: Excessive context can slow response times

- Limits: AI models have maximum token limits (context windows)

Configuration Parameters

| Parameter | Type | Default | Range | Description |

|---|---|---|---|---|

| Enable context management for new conversations | Toggle | ON | ON/OFF | Activates automatic context management |

| Max Context Tokens | Number | 64,000 | 1,000 - 10,000,000 | Maximum tokens allocated for conversation history |

| Preserve Recent Messages | Number | 5 | 1 - 99 | Minimum recent messages always retained in context |

Enable Context Management

Toggle to enable or disable automatic context management for new conversations.

When Enabled:

- Context is automatically managed based on Max Context Tokens setting

- System preserves recent messages as specified

- Older messages are automatically summarized or removed when token limit is approached

- Conversation continuity is maintained efficiently

When Disabled:

- All conversation history is sent with each request (until model limit reached)

- No automatic context optimization occurs

- May hit model token limits in longer conversations

- Higher token costs and potential performance issues

Max Context Tokens

Specifies the maximum number of tokens to use for conversation context in each AI request.

What are tokens?

- Basic units of text that AI models process

- Approximately: 1 token ≈ 4 characters or ≈ 0.75 words in English

- Example: “Hello, how are you?” ≈ 5-6 tokens

- Both input (context) and output (response) count toward model limits
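As a rough illustration of the rule of thumb above, the sketch below estimates token counts from character length and converts tokens to approximate English words. The function names are hypothetical and not part of the product. Real tokenizers differ by model, so treat the results as approximations only.

```python
import math


def estimate_tokens(text: str) -> int:
    """Approximate token count using the ~4 characters per token heuristic."""
    return math.ceil(len(text) / 4)


def tokens_to_words(tokens: int) -> int:
    """Approximate English word count using the ~0.75 words per token heuristic."""
    return round(tokens * 0.75)


print(estimate_tokens("Hello, how are you?"))  # 19 characters -> 5
print(tokens_to_words(4096))                   # 3072
```

A heuristic like this is useful for sizing Max Context Tokens before a conversation starts; it is not a substitute for the count the model actually reports.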

Consider Your Model

- gpt-4.1: 128,000 tokens

- GPT-4: 8,192 tokens (standard) or 32,768 tokens (32k version)

- GPT-3.5 Turbo: 16,385 tokens

Within the model limit, leave headroom for:

- System instructions and prompts (your default instructions count here)

- AI’s response generation

- Safety margins to prevent hitting hard limits
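To make the headroom idea concrete, here is a minimal sketch that derives an upper bound for Max Context Tokens from a model limit. The function name, the 5% safety margin, and the example token counts for instructions and responses are all assumptions chosen for illustration, not values defined by the product.

```python
def max_history_budget(
    model_limit: int,
    system_tokens: int,
    response_tokens: int,
    safety_percent: int = 5,
) -> int:
    """Tokens left for conversation history after reserving headroom.

    All inputs are illustrative assumptions; adjust them to your model and prompts.
    """
    safety_margin = model_limit * safety_percent // 100
    reserved = system_tokens + response_tokens + safety_margin
    return max(model_limit - reserved, 0)


# Example: a 128,000-token model, ~1,000 tokens of instructions,
# ~4,096 tokens reserved for the response, 5% safety margin.
print(max_history_budget(128_000, 1_000, 4_096))  # 116504
```

Under these assumptions, the default of 64,000 sits comfortably inside the available budget, while a value close to the full model limit would not.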

Balance Quality vs Cost

- Higher Values (100,000+):
  - ✔️ Better context retention for very long conversations
  - ✔️ AI can reference information from much earlier in conversation
  - ✘ Higher token costs per request
  - ✘ Slower response times

- Medium Values (10,000-64,000):
  - ✔️ Good balance of quality and cost (recommended)
  - ✔️ Suitable for most use cases
  - ✔️ Efficient performance

- Lower Values (1,000-10,000):
  - ✔️ Minimal token costs
  - ✔️ Faster responses
  - ✘ May lose context in longer conversations
  - ✘ AI may “forget” earlier discussion points

Adjust by Use Case

- Short Q&A Sessions: 10,000-20,000 tokens (quick questions, brief interactions)

- Standard Development: 30,000-64,000 tokens (typical coding, documentation tasks)

- Long Technical Discussions: 64,000-128,000 tokens (complex debugging, architecture reviews)

- Extensive Analysis: 100,000+ tokens (large codebase reviews, comprehensive research)

Preserve Recent Messages

Specifies the minimum number of recent conversation messages to always keep in context, regardless of token limits.

- Priority Protection: These messages are never removed or summarized, even if total tokens exceed Max Context Tokens

- Recent Context: Ensures AI always has immediate conversation context

- Continuity: Maintains coherent responses even when older context is summarized

- Count Method: Messages are counted individually, so a user message and the corresponding AI response count as 2 messages

Consider Conversation Style

- Quick Q&A (1-3 messages):
  - Each question is independent
  - Minimal context dependency
  - Lower value sufficient

- Iterative Development (5-10 messages):
  - Building on previous responses
  - Code refinements and iterations
  - Medium value recommended (default 5 works well)

- Complex Problem-Solving (10-20 messages):
  - Multi-step troubleshooting
  - Extended debugging sessions
  - Higher value ensures continuity

Balance with Token Limits

- Recent messages are always kept, even if they exceed Max Context Tokens

- If 5 recent messages contain 70,000 tokens but Max Context Tokens = 64,000:
  - All 5 recent messages are still preserved
  - Only older messages beyond these 5 are subject to token limits

- Set this value carefully to avoid unintentionally high token usage
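The interaction between the two settings can be sketched as follows. This is an illustrative model only, not the product's implementation: the `Message` type and `select_context` function are hypothetical, and older messages are simply dropped here, whereas the real system may summarize them instead.

```python
from dataclasses import dataclass


@dataclass
class Message:
    role: str
    tokens: int


def select_context(
    messages: list[Message], max_context_tokens: int, preserve_recent: int
) -> list[Message]:
    """Keep the last `preserve_recent` messages unconditionally, then add
    older messages (newest first) while the token budget allows."""
    if preserve_recent < 1:
        raise ValueError("preserve_recent must be at least 1")
    recent = messages[-preserve_recent:]
    older = messages[:-preserve_recent]
    used = sum(m.tokens for m in recent)  # may already exceed the budget
    kept_older: list[Message] = []
    for message in reversed(older):
        if used + message.tokens > max_context_tokens:
            break
        kept_older.append(message)
        used += message.tokens
    kept_older.reverse()  # restore chronological order
    return kept_older + recent


# Scenario from the list above: 5 recent messages totalling 70,000 tokens.
older = [Message("user", 10_000) for _ in range(3)]
recent = [Message("user", 14_000) for _ in range(5)]
context = select_context(older + recent, 64_000, 5)
print(len(context), sum(m.tokens for m in context))  # 5 70000
```

In this sketch the preserved messages alone push the request to 70,000 tokens, which is why a high Preserve Recent Messages value can raise token usage above the configured maximum.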

Testing Recommendations

- Start with default (5) and monitor conversation quality

- Increase if: AI loses track of recent discussion points

- Decrease if: Token costs are too high and conversations are short

- Monitor: Check how often you need to re-explain recent context

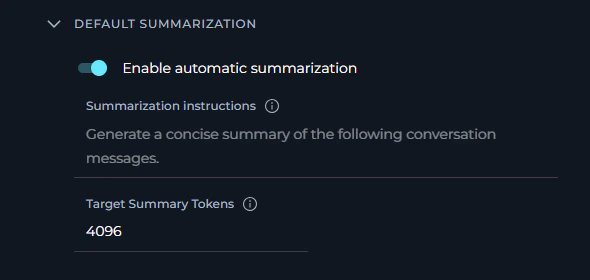

DEFAULT SUMMARIZATION

The Default Summarization accordion section configures automatic conversation summarization for new conversations. When enabled, long conversations are automatically condensed to maintain context while reducing token usage.

How it works:

- Detects when conversation length reaches a threshold

- Generates a concise summary of older messages

- Replaces older messages with the summary

- Preserves recent messages (as specified in Context Management)

- Maintains conversation continuity while reducing tokens

Configuration Parameters

| Parameter | Type | Default | Range/Options | Description |

|---|---|---|---|---|

| Enable automatic summarization | Toggle | ON | ON/OFF | Activates automatic conversation summarization |

| Summarization instructions | Multiline text | “Generate a concise summary of the following conversation messages.” | - | Custom instructions for summary generation |

| Target Summary Tokens | Number | 4,096 | 100 - 4,096 | Target token count for generated summaries |

Enable Automatic Summarization

When Enabled:

- System monitors conversation length continuously

- Automatically triggers summarization when threshold is reached

- Older messages are condensed into a summary

- Recent messages remain untouched

- Token usage is optimized for long conversations

When Disabled:

- No automatic summarization occurs

- Full conversation history is maintained (subject to Context Management limits)

- May hit token limits faster in extended conversations

- Manual context management may be required

Summarization Instructions

Custom instructions that guide how conversation summaries are generated.

Default Instructions: “Generate a concise summary of the following conversation messages.”

Technical Discussions

Problem-Solving Sessions

Target Summary Tokens

Specifies the target length (in tokens) for generated conversation summaries.

Token Reference:

- 256 tokens: ~192 words - short paragraph summary

- 1024 tokens: ~768 words - moderate detail summary

- 4096 tokens (default): ~3072 words - comprehensive summary

Example:

- Messages 1-20: 45,000 tokens

- Summary of messages 1-15: ~4,096 tokens (Target Summary Tokens)

- Original messages 16-20: 15,000 tokens (preserved recent messages)

- Total Context: ~19,096 tokens (reduced from 45,000)
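The arithmetic in this example can be checked with a short calculation. The variable names are illustrative, and the summary is assumed to land exactly on the target length, which in practice is only approximate.

```python
total_before = 45_000     # messages 1-20, unsummarized
recent_tokens = 15_000    # messages 16-20, preserved as-is
summary_tokens = 4_096    # Target Summary Tokens for messages 1-15

# Messages 1-15 account for the remainder of the original conversation.
summarized_source = total_before - recent_tokens
total_after = summary_tokens + recent_tokens
saved = total_before - total_after

print(summarized_source)  # 30000
print(total_after)        # 19096
print(saved)              # 25904
```

Under these assumptions, 30,000 tokens of older messages shrink to about 4,096, cutting the context by roughly 58%.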

Brief Summaries (100-512 tokens)

Use when:

- Conversations are highly repetitive

- Maximum token savings needed

- Simple Q&A that doesn’t require much context

Trade-offs:

- ✔️ Maximum token reduction

- ✔️ Lowest summarization costs

- ✔️ Fastest summary generation

- ✘ May lose important details

- ✘ AI may need clarification more often

Moderate Summaries (512-2048 tokens)

Use when:

- Balance detail retention with token savings

- Standard technical discussions

- General-purpose conversations

Trade-offs:

- ✔️ Good balance of detail and conciseness

- ✔️ Preserves key points and decisions

- ✔️ Reasonable token costs

- ✔️ Suitable for most use cases

Comprehensive Summaries (2048-4096 tokens - default)

Use when:

- Complex technical discussions

- Multi-topic conversations

- Conversations with critical context that must be preserved

- Detailed problem-solving sessions

Trade-offs:

- ✔️ Maximum detail preservation (default)

- ✔️ Rich context for AI to reference

- ✔️ Better continuity across long conversations

- ✘ Higher summarization costs

- ✘ Summaries themselves consume significant tokens

How the Sections Work Together

Example Scenario: Long Development Session

- GENERAL (Foundation):
  - Personality: “Nerdy” - Technical deep-dives
  - Instructions: “Use TypeScript, include unit tests, explain trade-offs”
  - Result: AI uses technical language and provides detailed code examples

- DEFAULT CONTEXT MANAGEMENT (Efficiency):
  - Max Context Tokens: 64,000
  - Preserve Recent Messages: 5
  - Result: AI can reference extensive history (up to 64K tokens) while always keeping the last 5 messages

- DEFAULT SUMMARIZATION (Optimization):
  - Enabled: Yes
  - Target Tokens: 4,096
  - Result: When the context approaches the token limit, older messages are automatically condensed into a summary of about 4K tokens

Combined result:

- AI maintains technical, detailed communication style throughout (General settings)

- Conversation can continue much longer without hitting token limits (Context Management + Summarization)

- Recent context always available for coherent responses (Preserve Recent Messages)

- Token costs optimized by automatic summarization of older content

- Consistent quality even in very long debugging or development sessions

How Settings Apply

Best Practices

Configuring All Three Sections Together

Start with Defaults, Then Customize

- Test Default Settings First:
  - General: Generic personality, no custom instructions
  - Context Management: Enabled, 64,000 tokens, 5 preserved messages
  - Summarization: Enabled, 4,096 target tokens

- Monitor Conversation Quality:
  - Are responses in the style you need?
  - Do you hit token limits?
  - Does summarization maintain enough detail?

- Adjust One Section at a Time:
  - Change personality or add instructions first
  - Adjust context limits if needed
  - Fine-tune summarization last

- Document What Works:
  - Keep notes on effective configurations
  - Track which settings work for which types of tasks

Understand Section Dependencies

- Disabling Context Management also disables the Max Context Tokens and Preserve Recent Messages inputs — they become grayed out and uneditable

- Summarization is only active when Context Management is enabled — if you disable context management, summarization settings are also inactive even if the toggle is on

- General settings are always independent — personality and instructions apply regardless of context management state
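The dependency rules above can be expressed as a small sketch. The class and function names are hypothetical and only model the behavior described in this list; they are not an API of the product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PersonalizationDefaults:
    """Illustrative model of the two toggles; not a product data structure."""
    context_management_enabled: bool = True
    summarization_enabled: bool = True


def context_fields_editable(settings: PersonalizationDefaults) -> bool:
    """Max Context Tokens and Preserve Recent Messages are editable only
    while context management is enabled."""
    return settings.context_management_enabled


def summarization_is_active(settings: PersonalizationDefaults) -> bool:
    """Summarization runs only when both toggles are on."""
    return settings.context_management_enabled and settings.summarization_enabled


# Summarization toggle is on, but context management is off.
settings = PersonalizationDefaults(context_management_enabled=False)
print(context_fields_editable(settings))   # False
print(summarization_is_active(settings))   # False
```

General settings do not appear in this sketch because, as noted above, personality and instructions apply regardless of the context management state.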

Consider Your Conversation Patterns

- Short conversations:
  - Focus on General settings (personality + instructions most important)
  - Context Management: Lower token limits (20,000-30,000) to save costs
  - Summarization: Can disable or leave at defaults for short conversations

- Standard conversations:
  - All three sections important
  - Context Management: Standard limits (40,000-64,000)
  - Summarization: Default settings work well

- Long conversations:
  - Context Management: Higher limits (64,000-128,000)
  - Summarization: Critical for cost control

Balance Quality, Cost, and Performance

Align with Primary Use Case

- Single Role: Choose the personality that best matches your main work function

- Multiple Roles: Select the personality you use most frequently

- Team Accounts: If shared, choose Generic for balanced, versatile interactions

- No Preference: Use None to keep the AI’s raw default behavior without any style overlay

- Experimentation: Try different personalities over a few days to find what works best

Consider Team Communication Style

- Formal Environments: Generic or QA for professional, technical communication

- Creative Teams: Quirky for innovative, out-of-the-box thinking

- Technical Teams: Nerdy for deep, detailed technical discussions

- Critical Review: Cynical for thorough, skeptical analysis

Personality Can Be Overridden

- Personalization sets the default for all new conversations

- Individual conversations can have different settings if needed

- No need to frequently change your default unless your work focus shifts

Writing Effective Default Instructions

Be Specific and Actionable

- ✔️ “Always include code examples in Python with type hints”

- ✔️ “Structure responses with a summary paragraph first, then details”

- ✔️ “Check for security vulnerabilities in all code suggestions”

- ✘ “Be helpful” (too generic, no clear action)

- ✘ “Give good answers” (subjective, no specific guidance)

- ✘ “Make things clear” (ambiguous, means different things to different people)

Keep Instructions Focused

- Prioritize: Include only the most important requirements

- Length: Aim for 3-7 key points (typically 100-300 words)

- Clarity: Use clear, direct language without ambiguity

- Relevance: Focus on instructions that apply broadly to your work

Overly long instructions can:

- Confuse the AI with conflicting requirements

- Reduce response quality due to complexity

- Make it harder to maintain and update over time

Use Consistent Terminology

- Standards: Reference specific frameworks, style guides, or methodologies
  - Example: “Follow PEP 8 for Python code” instead of “use good Python style”

- Technical Terms: Use precise technical vocabulary
  - Example: “Use async/await pattern” instead of “make it asynchronous”

- Format: Specify exact formats
  - Example: “Use ISO 8601 date format (YYYY-MM-DD)” instead of “use standard dates”

- Examples: Include brief examples for complex requirements

Structure Instructions Logically

Review and Update Regularly

- Monthly Review: Check if instructions still match your current needs

- Project Changes: Update when starting new types of projects or roles

- Team Feedback: Adjust based on conversation quality and outcomes

- Refinement: Simplify or clarify instructions that aren’t working well

- Version Control: Keep notes on what changes you make and why

Testing Your Personalization Settings

Test Incrementally

- Start Simple: Begin with just personality selection, no custom instructions

- Add Instructions Gradually: Add one instruction at a time

- Evaluate Each: Use the new settings in several conversations before adding more

- Document: Keep notes on what works well and what doesn’t

- Iterate: Refine based on actual conversation outcomes

Create Test Conversations

- Test Personality: Create a new conversation and ask open-ended questions to see personality in action

- Test Instructions: Ask the AI to perform tasks that should trigger your custom instructions

- Compare Responses: Try same question with different personality settings to see differences

- Edge Cases: Test with requests that might conflict with your instructions

Troubleshooting

Settings Not Applying to New Conversations

Symptoms:

- New conversations don’t reflect configured personality

- Default instructions not being followed in new conversations

- AI behavior seems unchanged after making changes

How auto-save works:

- Personality dropdown: saves immediately when you select a new option

- Text fields: save when you click/tab out of the field

- Numeric fields: save on blur, but only if the entered value is within the valid range

- Toggles: save immediately on toggle

What to check:

- Check whether a “Settings saved successfully” toast appeared after each change. If not, the save may not have triggered

- Check whether a “Failed to save settings” error appeared — indicates a network or server issue

- Verify you are testing in a brand new conversation — existing conversations retain their original settings

- For numeric fields, verify the values are within the allowed ranges (see Configuration Parameters tables)

- Confirm settings are visible when reopening the Personalization page after the save notification appeared

Solutions:

- Re-enter any field value and click outside to trigger auto-save again

- Refresh the page and re-apply any changes that weren’t confirmed with a toast

- Create a brand new conversation (do not continue an existing one) to test the new settings

- Clear browser cache if the page is loading stale data: Ctrl+Shift+Delete (Windows) or Cmd+Shift+Delete (Mac)

Numeric Fields Not Saving (Silent Failure)

Symptoms:

- You type a new value for Max Context Tokens, Preserve Recent Messages, or Target Summary Tokens

- No “Settings saved successfully” toast appears after clicking away

- The field may show a red validation error message

Valid ranges:

| Field | Min | Max |

|---|---|---|

| Max Context Tokens | 1,000 | 10,000,000 |

| Preserve Recent Messages | 1 | 99 |

| Target Summary Tokens | 100 | 4,096 |

Solutions:

- Look for a red error message directly below the field

- Correct the value to be within the valid range

- Click outside the field — the save will trigger automatically once the value is valid

- Wait for the “Settings saved successfully” confirmation toast
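The range check behind this behavior can be sketched as below, using the limits from the table above. The field keys and the `validation_error` function are hypothetical names for illustration; the product's actual validation messages may differ.

```python
from typing import Optional

# Allowed ranges, taken from the table above.
VALID_RANGES = {
    "max_context_tokens": (1_000, 10_000_000),
    "preserve_recent_messages": (1, 99),
    "target_summary_tokens": (100, 4_096),
}


def validation_error(field: str, value: int) -> Optional[str]:
    """Return an error message if the value is out of range, else None.

    A value that fails this check would not be saved, which matches the
    silent failure described above.
    """
    minimum, maximum = VALID_RANGES[field]
    if not minimum <= value <= maximum:
        return f"{field} must be between {minimum} and {maximum}"
    return None


print(validation_error("preserve_recent_messages", 100))
# preserve_recent_messages must be between 1 and 99
print(validation_error("target_summary_tokens", 4_096))  # None
```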

Context Management Fields Are Grayed Out

Symptoms:

- Max Context Tokens and Preserve Recent Messages fields appear grayed out and cannot be edited

- Summarization settings appear inactive

Solutions:

- Scroll up to the Default Context Management accordion

- Enable the “Enable context management for new conversations” toggle

- The grayed-out fields will become editable immediately

- The toggle saves automatically — no further action needed

AI Not Following Default Instructions

Symptoms:

- AI responses don’t follow specified guidelines

- Instructions appear to be partially followed or ignored

- Inconsistent behavior across different conversations

Possible causes:

- Instructions Too Complex: Conflicting or contradictory requirements

- Instructions Too Vague: Ambiguous guidelines that AI can’t interpret clearly

- Model Limitations: Some instructions may be beyond model’s current capabilities

- User Messages Override: Your questions contradict default instructions

Solutions:

- Simplify Instructions:
  - Reduce to 3-5 key points
  - Make each instruction specific and actionable
  - Remove any conflicting requirements
  - Test with minimal instructions first, then add complexity

- Clarify Requirements:
  - Replace vague terms (“good”, “clear”, “helpful”) with specific examples
  - Use concrete technical terminology
  - Provide examples of desired behavior in the instructions themselves

- Check for Conflicts:
  - Review instructions for contradictions
  - Ensure personality choice aligns with instruction style
  - Test with Generic personality to isolate instruction issues

- Test Incrementally:
  - Start with one instruction, verify it works in a new conversation
  - Add instructions one at a time
  - Identify which instruction causes problems
  - Refine problematic instruction before adding more

Personality Selection Not Visible in Responses

Symptoms:

- All personalities seem to behave the same way

- Communication style doesn’t match selected personality

- Can’t tell the difference between Generic and other personalities

Possible causes:

- Personality differences are subtle and contextual

- Some tasks (e.g., simple data retrieval, calculations) don’t show personality variation

- Personality affects tone, approach, and detail level, not factual accuracy

What to expect:

- Personality Affects: Tone of voice, level of technical detail, approach to problem-solving, communication style, enthusiasm level

- Personality Doesn’t Affect: Factual accuracy, basic task completion, data retrieval, calculations

- Most Visible In: Complex explanations, recommendations, creative tasks, problem-solving approaches, code reviews

How to test:

- Ask Open-Ended Questions: “How should I approach optimizing this code?” or “What are the trade-offs?”

- Request Analysis: “Analyze this architecture” or “Review this design”

- Compare Side-by-Side: Create conversations with different personalities, ask the same complex question

- Use Creative Tasks: Brainstorming, problem-solving, or design discussions show personality most clearly

Failed to Save Settings Error Appears

Symptoms:

- A red “Failed to save settings” toast notification appears after making a change

- The change appears in the UI but was not persisted

Possible causes:

- Network connectivity issues

- Session timeout (logged out)

- Server error or maintenance

- Browser issues or extensions blocking the request

Solutions:

- Check Network: Ensure stable internet connection

- Refresh Page: Reload page and re-apply the change (Ctrl+R or Cmd+R)

- Check Login: Verify you’re still logged in — re-authenticate if needed

- Try Different Browser: Test in incognito/private mode or different browser

- Clear Cache: Clear browser cache and cookies

- Check Console: Open browser developer tools (F12) → Console tab to see any error messages

- Contact Support: If the issue persists, contact your administrator with error details from the console

Related pages:

- Context Management - Manage conversation memory and token usage

- Chat Functionality - Using chat features and conversations

- Creating Agents - Configure agents with custom instructions